In part one of this two-part series, I described what we know about the September 14 attack against the drug sites on the Tor network. To review:

- The attack simultaneously took down 11 drug sites on the dark web.

- The site administrators indicated a problem on a public forum.

- There was no discernible traffic anomaly.

What follows is what I have deduced about the attack, along with some speculation about possible perpetrators. Having looked at the attack method, I am certain that this was an application-level attack. In this case, the application being attacked is the web server. This means the attacker flooded the markets’ web servers with requests, forcing them to spend resources processing each one. With enough of these requests, a web server begins failing to respond to legitimate requests, resulting in a denial of service for users. A few reasons lead me to this conclusion.

- It is unlikely that the attacker somehow found the real IPs of all of the targets. If that were true, the attacker would have far better things to do than just DDoS drug markets, because it would mean they had found an extremely valuable vulnerability in Tor.

- There is no sign of a network-level attack. Had this been one, the bandwidth anomalies would have reflected it. An application-level attack uses very little bandwidth in comparison to a network-level attack.

- An application-layer attack is uniquely effective against Tor hidden service sites.
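The flooding mechanism described above can be illustrated with a toy model in Python. This is only a sketch: the function name `simulate`, the capacity, the queue limit, and the request rates are all assumptions picked for illustration, not measurements from the attacked markets. It also assumes, pessimistically, that attack requests arrive ahead of legitimate ones in every tick.

```python
from collections import deque

def simulate(capacity_per_tick, legit_per_tick, attack_per_tick, ticks, queue_limit):
    """Toy web server with a bounded request queue (all parameters hypothetical).

    Each tick, requests arrive and are queued until the queue is full; anything
    beyond that is dropped. The server then handles up to capacity_per_tick
    queued requests in arrival order.
    Returns the fraction of legitimate requests that were served.
    """
    queue = deque()
    legit_sent = 0
    legit_served = 0
    for _ in range(ticks):
        # Assumption: attack traffic reaches the server first in each tick.
        arrivals = ["attack"] * attack_per_tick + ["legit"] * legit_per_tick
        legit_sent += legit_per_tick
        for kind in arrivals:
            if len(queue) < queue_limit:
                queue.append(kind)  # accepted; otherwise silently dropped
        for _ in range(min(capacity_per_tick, len(queue))):
            if queue.popleft() == "legit":
                legit_served += 1
    return legit_served / legit_sent

baseline = simulate(capacity_per_tick=100, legit_per_tick=10,
                    attack_per_tick=0, ticks=50, queue_limit=200)
flooded = simulate(capacity_per_tick=100, legit_per_tick=10,
                   attack_per_tick=1000, ticks=50, queue_limit=200)
print(baseline, flooded)
```

In this toy setup, legitimate users are served fully without the flood and not at all during it, even though each attack request is no larger than a normal one. The server's processing capacity is what gets saturated, not the network link.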

Typically, when a site is experiencing a layer 7 DDoS attack, the response is something like this:

- Find the IPs that are sending the malicious traffic (roughly).

- Block the malicious IPs at the network level (preferably as far upstream as possible).

- Refine the list of blocked IPs so that legitimate users are not blocked.
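The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular provider does it: the function names, the request threshold, and the log (which uses reserved documentation IP ranges) are all hypothetical, and real blocking would happen at a firewall or upstream provider rather than in application code.

```python
from collections import Counter

def find_suspects(request_log, threshold):
    """Step 1: roughly flag every source IP that sent more than `threshold` requests."""
    counts = Counter(ip for ip, _path in request_log)
    return {ip for ip, n in counts.items() if n > threshold}

def refine(suspects, known_good):
    """Step 3: take known-legitimate heavy users back off the blocklist."""
    return suspects - known_good

def apply_block(request_log, blocklist):
    """Step 2: drop requests from blocked IPs (done upstream in practice)."""
    return [(ip, path) for ip, path in request_log if ip not in blocklist]

# Hypothetical log: one flooding source, one busy but legitimate monitor, one normal user.
log = (
    [("203.0.113.7", "/search")] * 500
    + [("198.51.100.20", "/health")] * 200
    + [("192.0.2.15", "/listing")] * 3
)

suspects = find_suspects(log, threshold=100)
blocklist = refine(suspects, known_good={"198.51.100.20"})
remaining = apply_block(log, blocklist)
print(sorted(blocklist), len(remaining))
```

The whole approach rests on one assumption: that a source IP address identifies a sender well enough to separate attackers from everyone else.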

In the case of a Tor hidden service site, this falls apart. With Tor, everyone's traffic comes from one of a few thousand Tor nodes, so the attacker and a legitimate user may, in fact, be connecting to a site at the same time with the same IP address. This means that to block the attack, one would have to inspect every request that reaches the site and somehow separate good from bad. This really isn’t useful, because something must still process every request; it just changes where the bottleneck forms unless a hardware filter is in place. Some inherent qualities of Tor would also complicate implementing such a device.
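To see why source-based blocking breaks down, here is a toy comparison in Python. It is a sketch under stated assumptions: the relay names, request counts, and threshold are invented, and it simplifies things by assuming the site sees only a shared relay address for each request. The `collateral` and `build` helpers are hypothetical names for this illustration.

```python
from collections import Counter
from itertools import cycle

def collateral(requests, threshold):
    """Block every source above `threshold`; return the share of legitimate requests lost."""
    counts = Counter(source for source, _client in requests)
    blocked = {s for s, n in counts.items() if n > threshold}
    legit = [s for s, client in requests if client == "legit"]
    return sum(1 for s in legit if s in blocked) / len(legit)

def build(attack_sources, legit_sources, n_attack=300, n_legit=30):
    """Spread attacker and legitimate requests round-robin over the given sources."""
    atk, leg = cycle(attack_sources), cycle(legit_sources)
    return ([(next(atk), "attacker") for _ in range(n_attack)]
            + [(next(leg), "legit") for _ in range(n_legit)])

relays = ["relay-a", "relay-b", "relay-c"]  # hypothetical shared addresses

# Ordinary site: the attacker has its own address, users have theirs.
clearnet = build(["attacker-ip"], ["user-1", "user-2", "user-3"])
# Tor-like case: attacker and users all appear behind the same relays.
tor_like = build(relays, relays)

clearnet_loss = collateral(clearnet, threshold=50)
tor_loss = collateral(tor_like, threshold=50)
print(clearnet_loss, tor_loss)
```

With distinct addresses the filter removes only the attacker. Once everyone shares the same few sources, the same filter blocks every legitimate request along with the attack.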

Whodunit?

I can think of a few possible culprits behind this attack. DDoS attacks are not typically considered that complex, and the mechanics behind them are straightforward most of the time. It’s essentially just “Send requests or data to this address and don’t stop doing that.” However, an attack that overwhelms 11 well-funded entities that are spending significant resources to stay online would likely require one or two technically skilled people who can leverage unique aspects of the hidden service system to overwhelm it. Could the culprits be one of the following entities?

- A government body. A government definitely has the motive and the resources to carry out this attack. It would also make sense as a continuation of past government operations against drug markets, most notably the recent sting operations against the AlphaBay and Hansa markets. Yet no government body has come forward to claim responsibility for these attacks.

- Someone trying to take down the competition. This seems more likely. The primary issue with this theory is that no new market has sprung up in the wake of these attacks. At first, there were a few small markets still online. These markets were later taken offline as they gained users. It is reasonable to think that eliminating competitors was the original reason for these attacks, and that as the attackers saw new markets gaining traction, they began attacking those markets as well. This theory is supported by viewing the attacks as very low-bandwidth attacks that use a small number of devices. If the attacks were ordinary HTTP floods, they would likely require thousands of devices and custom malware to run on those devices.

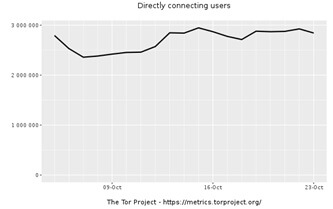

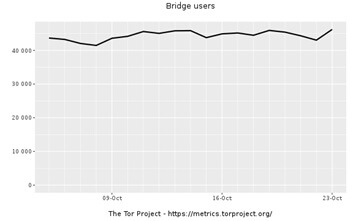

As further evidence to support this, the Tor metrics do not show any massive spikes in user count. However, this fact may be misleading because the Tor user statistics are volatile and may themselves be affected by the attacks. (Fewer people may use Tor when markets are offline, while more people may use Tor to check if the markets are online.) Additionally, there were about three million people connected to the Tor network, so spotting a small botnet that joined the network is rather challenging: there is too little signal and too much noise.

What are we to take away from this? The most interesting thing, and the thing that we will remember, is that the people we typically consider the bad guys now have the problems of the “good guys.” Typically, in security, the bad guys have the advantage. They only have to find one meaningful flaw, while the good guys have to prevent every possible meaningful flaw. Even if, as I surmise, these attacks are not being carried out by law enforcement, there is no doubt that law enforcement will begin to use similar tactics against similar sites. In short, life is about to get much easier for law enforcement because HUMINT is expensive and hard, whereas SIGINT is easy, cheap, and low risk. As the bad guys generate more signals, the good guys will be able to do more signals intelligence. This is a losing proposition for the bad guys due to the inherently asymmetrical nature of security.

About the Author: Nick McKenna is a student researcher who has had an interest in cyber security for the past five years. Nick likes seeing how things work and trying to break them. If you have any questions, you can contact Nick here. Editor’s Note: The opinions expressed in this guest author article are solely those of the contributor, and do not necessarily reflect those of Tripwire, Inc.