“Send it to the cloud” has become an increasingly common answer to the question of how to handle massive amounts of data. On one level, I understand it. Infrastructure owned by a third party, with teams dedicated to implementing security by design, continuous testing and validation: this all sounds attractive.

However, what many clients don’t realize is that whilst the third party’s infrastructure is secured and regularly tested, your implementation of the environment is not. Take AWS, one of the popular cloud service and infrastructure providers, for example. It works on a Shared Responsibility Model. The AWS backend is secured, but the client is responsible for the configuration of their own environment, services and even encryption settings.

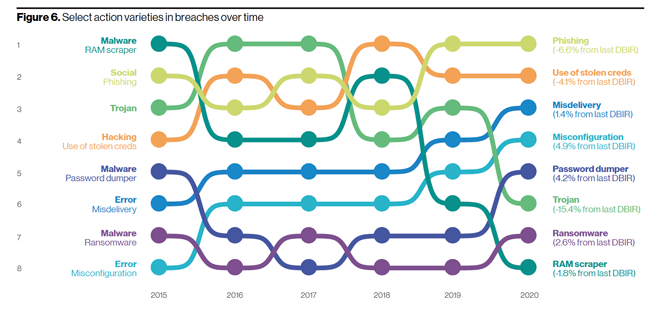

If we look at the 2020 Verizon Data Breach Investigations Report (DBIR), we see that misconfigurations saw a 4.9% increase from the 2019 report. They have been on the rise since 2017.

2020 Verizon Data Breach Investigations Report – Actions in Breaches over time

If you’re following breach media at all, you have likely come across at least one database with unauthorized access due to no password being required. How could they be so foolish?

It goes back to the Shared Responsibility Model that essentially all cloud providers have.

- Was the deployment team educated on security configuration requirements?

- Did anyone check what the default configuration is or how it should be implemented?

- Was a Data Protection Impact Assessment (DPIA) done?

- Did they do a security assessment during the testing phase?
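Some of these checks can be partly automated before go-live. The following is a minimal Python sketch, not a real provider API: the `DatastoreConfig` fields and the `audit` function are hypothetical names chosen for illustration, and a real review would read the equivalent settings from your provider's own tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatastoreConfig:
    """Hypothetical summary of the settings the client owns under shared responsibility."""
    name: str
    auth_required: bool
    encrypted_at_rest: bool
    publicly_reachable: bool


def audit(config: DatastoreConfig) -> list[str]:
    """Return a human-readable finding for each risky setting."""
    findings = []
    if not config.auth_required:
        findings.append(f"{config.name}: no authentication required")
    if not config.encrypted_at_rest:
        findings.append(f"{config.name}: encryption at rest is disabled")
    if config.publicly_reachable:
        findings.append(f"{config.name}: reachable from the public internet")
    return findings


# Illustrative values only: a database left open with no password.
exposed = DatastoreConfig("customer-db", auth_required=False,
                          encrypted_at_rest=True, publicly_reachable=True)
hardened = DatastoreConfig("customer-db", auth_required=True,
                           encrypted_at_rest=True, publicly_reachable=False)

for finding in audit(exposed):
    print(finding)
```

A check like this does not replace a DPIA or a security assessment, but running it in the testing phase makes an unexamined default visible before launch.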

Cloud deployments don’t reduce your data protection responsibilities. They increase the attack surface and threat landscape for data you’re still responsible for protecting. Whilst moving to the cloud might reduce other costs, it's important to do a proper risk and cyber security assessment of the solution prior to launching. Otherwise, you may end up spending the ‘cost savings’ on security add-ons or incident response.

Right now, many of us are working within a forced remote environment. For some, this is a positive. There are even a few saying they have more time! However, for others, this has been a hard transition, especially for those supporting the rapid deployment of remote technical support. Rapid transition in an emergency is one thing, but when you’re asked to migrate to a cloud infrastructure, proper planning and requirements gathering are a must.

Context is everything

When looking at new solutions, I always start with what is currently implemented within the environment, from the SIEM to patch management, vulnerability management, MDM and more. Then I consider what the requirements and workflows are. This is all so I can put the solution into context.

Watching demos can be brilliant, as they highlight all the capabilities and integrations. But without the above knowledge, I’m not able to ask intelligent, focused questions, and I can't tell whether the solution is going to enhance or hinder our environment.

For example, pretend you need to deploy a new patch management solution that has an integrated remote technical support option. You demo products A, B and C, and then you select product A due to what it offers, but during the implementation phase, you realize this solution is not going to work. The investigation/requirements gathering never flagged that your theoretical environment has Linux servers and macOS end devices, which product A doesn’t support. Time and money are wasted on an issue that could easily have been caught by an initial email of questions to the vendors.
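The scenario above can be sketched as a simple set comparison between what the environment runs and what each product supports. This is a minimal Python illustration: only product A's lack of Linux and macOS support comes from the example, while the platform lists for products B and C and the `unsupported_platforms` helper are assumptions made up for the sketch.

```python
def unsupported_platforms(environment: set[str], supported: set[str]) -> set[str]:
    """Platforms present in the environment that a product does not cover."""
    return environment - supported


# Inventory gathered during requirements gathering (theoretical environment).
environment = {"windows", "linux", "macos"}

# Vendor-reported platform support. B and C are hypothetical values.
products = {
    "A": {"windows"},
    "B": {"windows", "linux", "macos"},
    "C": {"windows", "linux"},
}

gaps = {
    name: sorted(unsupported_platforms(environment, supported))
    for name, supported in products.items()
}

for name, missing in gaps.items():
    status = "covers the environment" if not missing else f"missing {', '.join(missing)}"
    print(f"Product {name}: {status}")
```

The comparison is trivial once the inventory exists, which is the point: the hard part is gathering the environment's context before the demos, not evaluating it afterwards.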

When looking to review the current maturity of your environment, in order to focus on enhancing this holistic program, seven questions you can ask are:

- What integrations are required?

- What is the capability of the operations team? Look at their experience and expertise, and make note of knowledge gaps for possible training.

- What is the organization’s inherent risk? i.e. risk without the controls in place.

- What are likely scenarios? i.e. likely attacks, types of malicious actors, motivations, etc.

- What compliance requirements are needed?

- What does the current program already cover?

- What is the available budget, and is the team able to properly build it?

Intelligence of your environment

NIST Cyber Security Framework

As we know from the 2020 DBIR, 55% of all breaches were from organized criminal groups, and 86% were from financially motivated actors. Knowing your inherent risk, likely attackers and their expected attacks is a brilliant way to use your existing knowledge to enhance the security posture.

Combine this intelligence about your environment with the different areas of a cyber security program, i.e. identify, protect, detect, respond, recover. Then finish it off by looking at the baseline of your environment and creating a recipe for proactive security measures tailored to the business needs.
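One way to picture this alignment is to tag each existing control with the area it supports and list the areas nothing covers yet. The Python sketch below is illustrative only: the tool-to-area mapping is an assumption for the example, not an authoritative classification, and `coverage_gaps` is a hypothetical helper.

```python
# The five areas named above, in order.
AREAS = ("identify", "protect", "detect", "respond", "recover")

# Assumed mapping of existing tooling to areas; adjust to your own program.
controls = {
    "vulnerability management": {"identify"},
    "patch management": {"protect"},
    "MDM": {"protect"},
    "SIEM": {"detect"},
}


def coverage_gaps(controls: dict[str, set[str]]) -> list[str]:
    """Return the areas that no current control is tagged with."""
    covered = set().union(*controls.values())
    return [area for area in AREAS if area not in covered]


print(coverage_gaps(controls))
```

With this assumed mapping, respond and recover come back as uncovered, which is where the baseline review and any new solution would focus first.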

Editor’s Note: The opinions expressed in this guest author article are solely those of the contributor, and do not necessarily reflect those of Tripwire, Inc.
