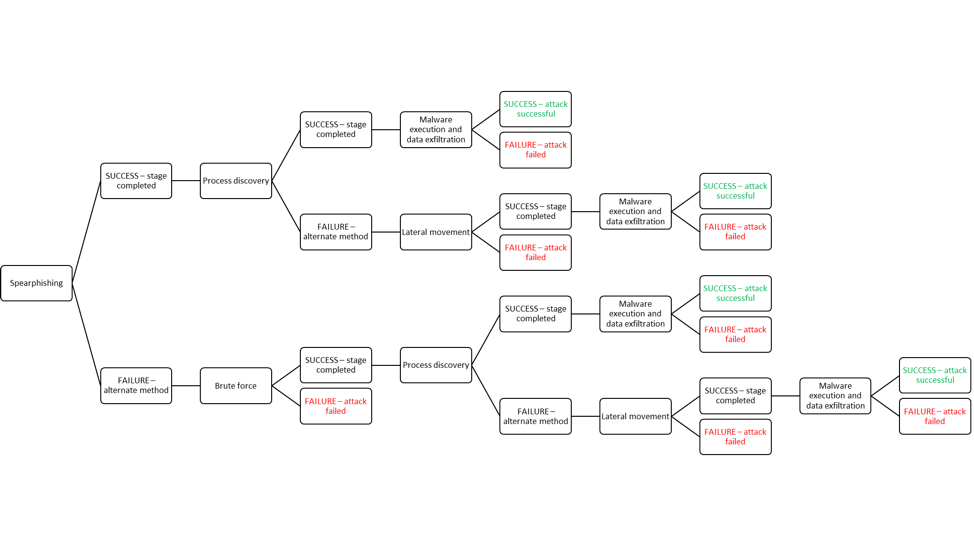

Cybersecurity is generally considered a highly reactive field in which professionals struggle to keep up with new and emerging threats. As the profession works to become more human-centered and proactive, I have attempted to design a new modeling process suited to these emerging priorities. It combines the existing conceptual, high-level research in economics on the cost/benefit analysis of threat actors with the static, generalized models currently used in threat analysis.

The initial step in developing this framework was an extensive search for information on the threat actors whose behavior is to be modeled. Once an organization has identified pertinent threats in its sector, it must categorize and research these threats to understand the types of attacks for which they are known. From this, the decision tree can be developed.

The first branch of the decision tree is the most common initial attack vector for the threat actor. This is written in the uncertainty format of “Success” or “Failure,” and subsequent steps are determined from there. For example, if a “Success” occurs, the attacker moves on to the next steps of the attack process. A “Failure,” however, does not necessarily mean that the attack has failed and the threat has ended. The attacker may have only one preferred point of entry, or they may have an arsenal of attack methods with which to make the initial breach.

Once the initial branch is made, subsequent branches are added, considering the possible actions a threat actor may take once the initial foothold is gained. For some threats, this may be a very straightforward process if only one modus operandi (MO) tends to be used. However, the novelty of this framework lies in its flexibility to consider the attacker’s actions if one of these steps fails. For example, if they are unable to detect an existing process, it does not mean the attack has failed; instead, they may move laterally and search another account or even another system for processes in which to run their malware.

Once the process is walked through to the end, the tree is rolled back to find the critical points at which a “Success” means the attack has succeeded (highlighted in green) and where a “Failure” means the attack has failed (highlighted in red). The example model shown here is based on the actions of the Turla group, a Russian group that has been widely analyzed and tends to use a narrow range of attack vectors.
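To make the structure described above more concrete, here is a minimal Python sketch of a Success/Failure decision tree and the roll-back step that collects its critical points. It is an illustration under stated assumptions, not the model from the diagram: the `Step` class, the `critical_points` function, and every step name are hypothetical, and the steps are generic placeholders rather than documented Turla behavior.

```python
from dataclasses import dataclass
from typing import List, Tuple, Union

# Terminal outcomes: "green" and "red" in the diagram's colour scheme.
ATTACK_SUCCEEDS = "attack succeeds"
ATTACK_FAILS = "attack fails"


@dataclass
class Step:
    """One attacker action with a Success branch and a Failure branch.

    Each branch leads either to another Step or to a terminal outcome.
    """
    name: str
    on_success: Union["Step", str]
    on_failure: Union["Step", str]


def critical_points(step: Step) -> List[Tuple[str, str, str]]:
    """Walk the tree and return (step name, outcome, terminal) for every
    branch that ends the attack one way or the other."""
    points = []
    for outcome, nxt in (("Success", step.on_success), ("Failure", step.on_failure)):
        if isinstance(nxt, Step):
            points.extend(critical_points(nxt))  # attack continues
        else:
            points.append((step.name, outcome, nxt))  # attack ends here
    return points


def post_entry_steps() -> Step:
    """Hypothetical steps taken once a foothold is gained."""
    lateral = Step(
        "move laterally and search another system",
        on_success=ATTACK_SUCCEEDS,
        on_failure=ATTACK_FAILS,
    )
    # Failing to find a process does not end the attack; it leads to lateral movement.
    return Step(
        "find an existing process for the malware",
        on_success=ATTACK_SUCCEEDS,
        on_failure=lateral,
    )


# Hypothetical tree: a failed preferred vector leads to a fallback entry method.
fallback = Step("fallback entry method", post_entry_steps(), ATTACK_FAILS)
root = Step("preferred initial attack vector", post_entry_steps(), fallback)

points = critical_points(root)
green = [p for p in points if p[2] == ATTACK_SUCCEEDS]
red = [p for p in points if p[2] == ATTACK_FAILS]

for name, outcome, terminal in points:
    print(f"{name}: {outcome} -> {terminal}")
```

In this sketch the preferred initial vector never appears among the critical points, because neither of its branches ends the attack: a “Failure” there only routes the attacker to the fallback method. The `green` and `red` lists correspond to the highlighted branches, and a defender might start by looking at which steps appear most often in them.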

Once organizations have determined which threat actors are of greatest concern, decision-makers can use this framework to determine the highest-priority areas for mitigation and monitoring. Applying this research can allow an organization to “personalize” its practices based on information specific to its sector, geographic area, and previous threat history. However, decision-makers must take care not to overfit such approaches and overlook vulnerabilities that may be exploited by an unexpected threat actor. While this framework can help prioritize action areas, it does not replace a general risk assessment that also considers areas of low attack probability but high impact.

In the future, I hope to expand this research by collaborating with a researcher with a mathematics background to quantify and validate the benefit received from any risk management decisions that are informed by this model. In addition, this methodology could benefit from application to live data from an actual attack scenario, whether collected from an actual breach or from a penetration test in which a specific threat actor is simulated. I will be discussing this work in further detail and presenting some more complex diagrams at BSides Las Vegas, which takes place on August 7th and 8th.

About the Author: Emily Shawgo recently graduated from Carnegie Mellon University with a master’s degree in Public Policy and Management with a concentration in Cybersecurity Management. She also has an undergraduate degree in Psychology and Political Science from Carlow University. Emily’s interests lie in penetration testing, threat analysis, and applying the study of human behavior to the field of cybersecurity.

Editor’s Note: The opinions expressed in this guest author article are solely those of the contributor, and do not necessarily reflect those of Tripwire, Inc.