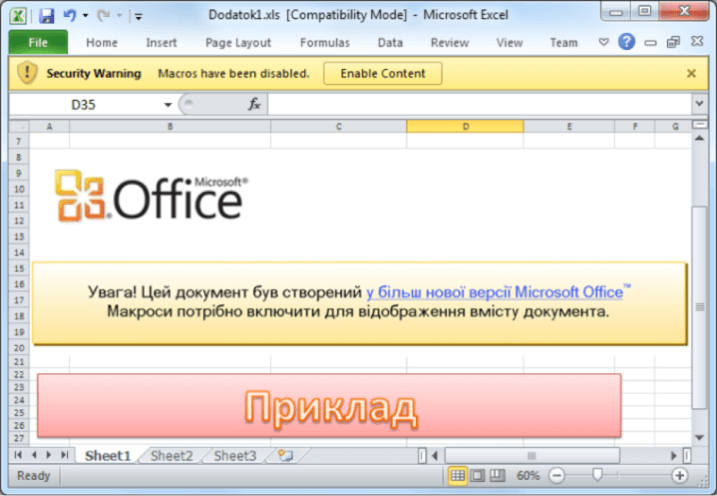

In our previous article, we started to lay out some important social engineering terms, such as phishing, spear-phishing, and pretexting. We even introduced what we call “Potentially Unwanted Leaks” (PUL): tidbits of information that, when out in the wild, become valuable nuggets to be used against you in a social engineering attack. This last installment in our ICS/SCADA series shows how social engineering was used to cause a blackout, the first known case of a cyberattack being directly responsible for a power outage.

On December 23, 2015, at 3:35 pm local time, in Ivano-Frankivsk Oblast (a southwestern region of Ukraine that borders Romania and is close to the borders of Hungary, Slovakia, and Poland), seven 110 kV and twenty-three 35 kV substations were disconnected for three hours. The power outage, which took out 30 substations, could have impacted up to three different energy distribution companies, causing 225,000 customers to lose power. Shortly thereafter, Ukraine’s SBU state security service responded by blaming Russia, not an unreasonable assertion given that plenty of lead time was required to conduct this operation.

How was this allowed to happen? Social engineering is how. It all started with a spear-phishing attack using spoofed addresses that made it seem as though the emails were coming from the Rada, the Ukrainian parliament. Rejecting such an email is always a tough proposition for any employee, and in certain social structures, ignoring an email from parliament could result in some unpleasant misfortune.

So, what happens? The employees open the email, open the attachment, allow a macro to run, and all nastiness breaks loose. The attachment is a manipulated Office document (screen capture credit: CyS Centrum) that asks the user to enable macros. Here comes the nastiness: once the macro runs, variants of the BlackEnergy malware start to infect the system. This social engineering step is crucial for attackers to gain a foothold in a system, and that is exactly what happened over the next six months.

Once the malware was on the system, authorized users started to lose control of administrative passwords and privileges. That led to even bigger problems, as the attackers made their way to critical systems such as Uninterruptible Power Supplies (UPSs) and supervisory control systems like Human Machine Interfaces (HMIs). And just to be sure, a variant of the KillDisk malware was installed on the systems as well, potentially as a means to hide the tracks of the malicious user.

The Simplest Solution May Be Most Effective

What’s the lesson here for ICS/SCADA systems? Well, it is that the threat is at our doorstep, and sometimes it is as simple as asking: should I click on the link or open the attachment? Our general view is, if in doubt, don’t! Cats have nine lives to be curious with; your computer systems and devices don’t.

You see, our view is that if you can thwart the initial phishing, spear-phishing, or pretexting attacks, the likelihood of a successful attack greatly diminishes. Admittedly, it is only a theory, and one that can only be proved right with an incredible amount of red team testing. But there are strong indicators to support it. For example, the Verizon DBIR, cited in our previous installment, found that phishing and pretexting combined represented almost 98 percent of incidents and breaches that involved social action. From the same report, 88 percent of pretexting attacks were carried out via email. We’re willing to put some money on there being a fire behind all this smoke.

This is why we believe that the first critical step to protecting the grid is employee training on social engineering attacks and social media use. We list a few other issues below, but really, without employee training, we see this as a loss right out of the gate. It’s like trying to win a football game by only throwing passes for 20 yards. Sure, you’ll make the occasional big gain, but you’re leaving easy yards on the field for no good reason. And the defense will know to always play zone against you. It’s just a matter of time before you lose, and lose often, if you stick with the same failed strategy.

How to Stay in the Game

Here are our quick employee training tips that we feel will give you a fighting chance to not only stay in the game but even win:

1. Have real and ongoing employee training.

“One-off” online sessions that an employee does during a 10-minute coffee break are great for the vendors. Not so great for you. Spotting suspicious emails is an exercise in muscle memory. Find a provider that can specifically tailor a training program to your plant or facility and make the training ongoing. There’s plenty of evidence that this strategy can substantially reduce your risk.

2. New York City mantra: see something, say something.

Let your IT department know if you’re seeing suspicious emails or if you feel you’re caught up in a social engineering attack. Have the ability to track logs if something feels off, as this information is vital to threat intelligence gathering. Once your IT department knows something feels off, some easy adjustments to filters can do the trick. In other cases, your heads-up could help the IT department block a potentially bad IP address from trying to communicate with the enterprise.

3. Sharing is caring.

Criminals move within industry verticals, so even working with competitors here can make the industry safer as a whole. You can still be business competitors and still share threat intelligence under the Cybersecurity Information Sharing Act.

4. Pick up the phone.

If you’re unsure whether an email is legitimate, take the 30 seconds to call your colleague, friend, or family member and ask, “Did you really send me this?” That call could save you millions of dollars, save your job, and spare you an avalanche of bad PR.

5. Red team test your employees on a regular basis.

Better to have a good learning experience and adjust accordingly than to have to say publicly, “Senator, we didn’t really plan for this even though we knew this type of threat existed.”

What do all these points have in common? They’re all about you. Social engineering is about you and getting at you at a personal level. And before people start jumping up and down that we are just trying to spread paranoia, ask yourself, what’s easier: some sophisticated computer attack or fooling somebody with a pressure scam? Criminals go for the path of least resistance. If you’ll notice, many of the suggestions we made are cost-effective and easy to implement. We’re trying to “up your cyber street smarts” game here.
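For readers who want to see what the “easy adjustments to filters” from tip 2 might look like, here is a minimal sketch in Python. It is not how any particular mail gateway works. The function names, the list of file extensions, and the two checks are our own assumptions for illustration: it flags a message whose From domain differs from its Return-Path domain, and any attachment in an Office format that can carry macros.

```python
from email import message_from_string
from email.utils import parseaddr

# Office file extensions that can carry macros (illustrative, not exhaustive).
MACRO_EXTENSIONS = (".docm", ".xlsm", ".pptm", ".doc", ".xls")


def domain_of(header_value):
    """Return the lowercased domain of an address header, or '' if absent."""
    _, address = parseaddr(header_value or "")
    return address.rpartition("@")[2].lower()


def suspicious_signs(raw_message):
    """Return a list of reasons why a raw email deserves a second look."""
    msg = message_from_string(raw_message)
    reasons = []

    # Check 1: the visible sender and the bounce address disagree.
    from_domain = domain_of(msg.get("From"))
    return_domain = domain_of(msg.get("Return-Path"))
    if from_domain and return_domain and from_domain != return_domain:
        reasons.append(
            f"From domain {from_domain} differs from "
            f"Return-Path domain {return_domain}"
        )

    # Check 2: any attachment whose file type can contain macros.
    for part in msg.walk():
        filename = part.get_filename()
        if filename and filename.lower().endswith(MACRO_EXTENSIONS):
            reasons.append(f"attachment {filename} may contain macros")

    return reasons
```

Treat the output as a prompt to pick up the phone, not as a verdict. Legitimate bulk mail often has mismatched domains too, and production filters lean on sender authentication checks such as SPF, DKIM, and DMARC rather than on a comparison this simple.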

Extra Time: Some Bonus Tips

While the point of this series was to get you thinking about social engineering and what impact it could have on ICS/SCADA systems, we have some other quick tips worth considering. They are:

1. Review ICS/SCADA security architecture.

Use experienced and qualified ICS security professionals to review network architecture, VPN configuration, firewall placement, router controls, and all that other tech garble that you may not understand but that is really important. There may be gaps there that need to be filled.

2. Enhance network security monitoring capabilities.

Yes, some attacks are sophisticated, deliberate, and operate in stealth. You need robust log collection and network traffic monitoring. Failure to perform these essential tasks prevents timely detection, pre-emptive response, and accurate incident investigation. Artificial Intelligence and Machine Learning will likely play an increased role here over time, but you still need a human at the helm to keep an eye on what is going on.
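As a rough illustration of what even basic log monitoring can surface, the sketch below counts failed logins per source address and reports any source that crosses a threshold. The log line format, the function name, and the default threshold of five are all hypothetical, chosen only for this example. Real ICS environments will have their own log formats and tooling.

```python
import re
from collections import Counter

# Hypothetical log line format, assumed for illustration only:
#   2024-01-01T09:00:00 FAILED_LOGIN user=operator1 src=203.0.113.7
FAILED_LOGIN = re.compile(r"FAILED_LOGIN user=(?P<user>\S+) src=(?P<src>\S+)")


def sources_over_threshold(log_lines, threshold=5):
    """Return {source: failure_count} for sources with at least `threshold` failed logins."""
    failures = Counter()
    for line in log_lines:
        match = FAILED_LOGIN.search(line)
        if match:
            failures[match.group("src")] += 1
    return {src: count for src, count in failures.items() if count >= threshold}
```

A report like this does not replace the human at the helm. It simply gives that person a short list of addresses worth a closer look.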

3. Review and update incident response, business continuity, and crisis communication plans.

Utilities are used to outages and are very capable of responding to those caused by weather or equipment failure. There has been a lot of practice and lessons learned here, but cyber threats are a new animal and need more of our attention as a normal course of business. Plans need to include protocols for nightmare scenarios such as wiper malware and ransomware.

About the Authors:

Paul Ferrillo is counsel in Weil’s Litigation Department, where he focuses on complex securities and business litigation, and internal investigations. He also is part of Weil’s Cybersecurity, Data Privacy & Information Management practice, where he focuses primarily on cybersecurity corporate governance issues, and assists clients with governance, disclosure, and regulatory matters relating to their cybersecurity postures and the regulatory requirements which govern them.

George Platsis has worked in the United States, Canada, Asia, and Europe as a consultant and an educator and is a current member of the SDI Cyber Team (www.sdicyber.com). For over 15 years, he has worked with the private, public, and non-profit sectors to address their strategic, operational, and training needs in the fields of business development, risk/crisis management, and cultural relations. His current professional efforts focus on human factor vulnerabilities related to cybersecurity, information security, and data security by separating the network and information risk areas.

Editor’s Note: The opinions expressed in this guest author article are solely those of the contributor, and do not necessarily reflect those of Tripwire, Inc.