Defensible and defended are not the same thing. There are characteristics of an environment that make it more or less defensible. While both IT and OT environments show mixed results, in general, OT environments are more defensible than IT environments. My hypothesis, as a reminder, is that a more defensible network is one in which currently unknown attacks can be more easily thwarted in the future. If any of that seems crazy or wrong, go read my earlier post on defensibility for context. At this point, we're left to explore how to move from defensible to defended.

Moving from Defensible to Defended

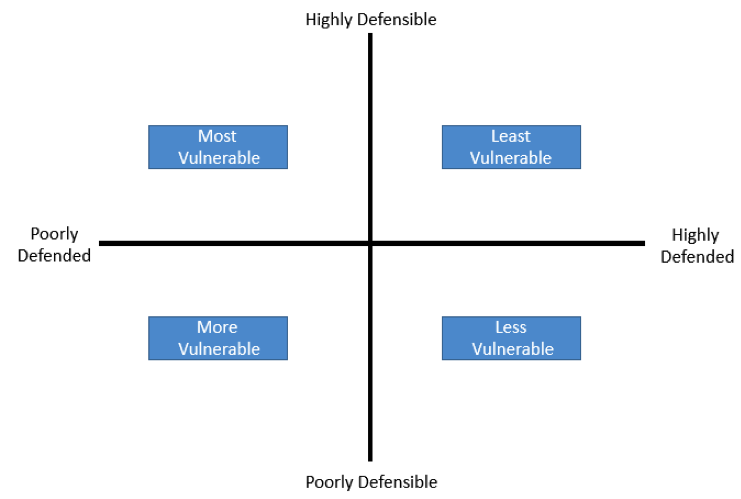

It's tempting to label this the "OT problem," but the principles aren't OT-specific. Any environment that needs to be defended can benefit from applying the categories of controls and objectives discussed below. The issue with a defensible but undefended environment is that it's vulnerable. That may seem overly simplistic to point out, but it's worth a sentence or two. Let me explain with a chart:

This should be mostly self-explanatory, except that you might not expect a highly defensible but poorly defended environment to be the most vulnerable. A highly defensible environment is likely to be more vulnerable when left undefended because of the relative simplicity of its architecture: the characteristics that make it easier to defend also make it easier to attack successfully when no one is defending it. For example, operational predictability makes an environment easier to monitor, but it also makes it easier for an attacker to determine what's in the environment and what those assets do. There is some security gained through complexity, but we've demonstrated over and over again that it's not sufficient for actual defense.

To move from a defensible environment to a defended one, let's take a principled approach to the problem. It's easy to read the blog-o-twitsphere and develop a deep desire to acquire the latest shiny security object, but it's more worthwhile to start by examining where you stand on some basic principles. I tend towards objectives and measurements, but that becomes heavy-handed pretty quickly. Instead, just consider how you would answer these questions:

- Can you identify all of the assets on your network?

- Can you identify when a configuration or asset changes in your environment? How quickly can you determine if the change is malicious?

- Can you identify anomalous traffic on your network? How quickly can you determine if the traffic is malicious?

- If you throw a virtual dart at a network map, how quickly could you isolate that device, system, or business process?

- In the last few incidents you had, did you have all the information necessary for the investigation? What took the longest to obtain?

These questions are oriented around the principles of inventory, visibility to change, effective control points, and incident response and forensics.
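The inventory and change-visibility questions can be answered in part by comparing periodic inventory snapshots against a known-good baseline. The sketch below is a minimal illustration under stated assumptions: the `Asset` record, its fields, and keying assets by MAC address are hypothetical choices for this example, not the format of any particular inventory tool. It reports assets that appeared, disappeared, or changed between the baseline and the latest scan.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """One inventory record. These fields are illustrative assumptions."""
    mac: str
    ip: str
    firmware: str


def diff_inventory(baseline: dict[str, Asset], current: dict[str, Asset]) -> dict[str, list[str]]:
    """Compare two inventory snapshots keyed by MAC address.

    Returns sorted lists of MACs that were added, removed, or whose
    recorded attributes changed since the baseline.
    """
    added = sorted(current.keys() - baseline.keys())
    removed = sorted(baseline.keys() - current.keys())
    changed = sorted(
        mac for mac in baseline.keys() & current.keys()
        if baseline[mac] != current[mac]  # any field differs (IP, firmware, ...)
    )
    return {"added": added, "removed": removed, "changed": changed}


if __name__ == "__main__":
    # Hypothetical snapshots: one firmware change, one missing host, one new host.
    baseline = {
        "00:1d:9c:00:00:01": Asset("00:1d:9c:00:00:01", "10.0.0.10", "2.1"),
        "00:1d:9c:00:00:02": Asset("00:1d:9c:00:00:02", "10.0.0.11", "2.1"),
    }
    current = {
        "00:1d:9c:00:00:01": Asset("00:1d:9c:00:00:01", "10.0.0.10", "2.2"),
        "00:1d:9c:00:00:03": Asset("00:1d:9c:00:00:03", "10.0.0.99", "1.0"),
    }
    print(diff_inventory(baseline, current))
```

The diff only tells you where to look; deciding whether a reported change is malicious is still a question for people and process.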
These are not intended to be a comprehensive set of information security principles or best practices; there's lots of material out there for that. Instead, questions like these can be used as grounding material, as in "Why do we need AI-based sandboxing technology if we can't tell when a new host joins the network?" or "Why would we implement full packet capture when it takes us two weeks to pull basic logs from our systems?" In other words, start with the basics, then move to advanced capabilities. Try not to get distracted by shiny objects. For OT environments specifically, there are some good, relatively simple steps forward:

- Implement tools to inventory the environment; use them to identify when something changes.

- Add basic network security monitoring (DNS traffic, top talkers, etc.).

- Centrally collect logs for analysis. Create some correlation rules to identify interesting events.
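As a rough illustration of the basic network security monitoring item, the sketch below computes top talkers from flow records and flags DNS queries for domains outside an allowlist, on the assumption that an operationally predictable OT network queries a small, known set of names. The `Flow` record, the `(client, domain)` query tuples, and the allowlist contents are hypothetical simplifications; real sources such as NetFlow/IPFIX exports or Zeek logs carry many more fields.

```python
from __future__ import annotations

from collections import Counter
from typing import Iterable, NamedTuple


class Flow(NamedTuple):
    """A simplified flow record (hypothetical shape for this sketch)."""
    src: str
    dst: str
    byte_count: int


def top_talkers(flows: Iterable[Flow], n: int = 5) -> list[tuple[str, int]]:
    """Return the n source addresses that sent the most bytes, largest first."""
    totals: Counter[str] = Counter()
    for flow in flows:
        totals[flow.src] += flow.byte_count
    return totals.most_common(n)


def unexpected_dns(
    queries: Iterable[tuple[str, str]], allowed: set[str]
) -> list[tuple[str, str]]:
    """Return (client, domain) pairs whose domain is not on the allowlist.

    Domains are compared case-insensitively, ignoring a trailing dot,
    so the allowlist should contain lowercase names without one.
    """
    return [
        (client, domain)
        for client, domain in queries
        if domain.lower().rstrip(".") not in allowed
    ]


if __name__ == "__main__":
    flows = [
        Flow("10.0.0.5", "10.0.0.9", 500),
        Flow("10.0.0.6", "10.0.0.9", 100),
        Flow("10.0.0.5", "10.0.0.8", 300),
    ]
    print(top_talkers(flows, n=2))
    # "historian.plant.local" is a made-up internal name for illustration.
    queries = [("10.0.0.5", "historian.plant.local."), ("10.0.0.6", "example.com")]
    print(unexpected_dns(queries, allowed={"historian.plant.local"}))
```

In a predictable environment, a shift in the top-talker list or a single query for an unfamiliar domain is itself worth a look.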

While these aren't rocket-science ideas, they provide tangible security benefits in any environment. More importantly, there's a reasonable chance you can do most of this with tools you already have or with open-source tools. If you work in an environment that's subject to NERC CIP, you can probably extend some of the tools you use for CIP compliance to perform more security-centric tasks as well.
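Correlation rules over centrally collected logs can start small. The following is a minimal sketch, not a rule from any particular SIEM: it assumes authentication logs have already been collected and normalized into a hypothetical `LogEvent` record, and it flags a successful login that follows several failures from the same source and account within a time window. The threshold of five failures and the ten-minute window are arbitrary placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable


@dataclass(frozen=True)
class LogEvent:
    """A normalized authentication event (hypothetical schema)."""
    time: datetime
    source: str   # source IP or hostname
    user: str
    outcome: str  # "failure" or "success"


def failures_then_success(
    events: Iterable[LogEvent],
    threshold: int = 5,
    window: timedelta = timedelta(minutes=10),
) -> list[tuple[str, str, datetime]]:
    """Alert when a success follows >= threshold failures from the same
    source and user, all within `window` of the success."""
    failures: dict[tuple[str, str], list[datetime]] = {}
    alerts: list[tuple[str, str, datetime]] = []
    for ev in sorted(events, key=lambda e: e.time):
        key = (ev.source, ev.user)
        # Keep only earlier failures that are still inside the window.
        recent = [t for t in failures.get(key, []) if ev.time - t <= window]
        if ev.outcome == "failure":
            recent.append(ev.time)
            failures[key] = recent
        elif ev.outcome == "success":
            if len(recent) >= threshold:
                alerts.append((ev.source, ev.user, ev.time))
            failures[key] = []  # start counting again after a success
    return alerts


if __name__ == "__main__":
    start = datetime(2024, 1, 1, 8, 0)
    events = [
        LogEvent(start + timedelta(minutes=i), "10.0.0.7", "operator", "failure")
        for i in range(5)
    ]
    events.append(LogEvent(start + timedelta(minutes=6), "10.0.0.7", "operator", "success"))
    print(failures_then_success(events))
```

Sorting by timestamp first keeps the rule correct even when logs from several collectors arrive out of order.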

Defensible Environments and Unknown Attacks

Let's return to the hypothesis and see what we can conclude. I'm going to broadly define an 'unknown attack' as one that isn't caught by any current detection system designed to catch that category of attack: for example, a virus for which no signature exists, or a piece of malware that fools a sandbox. The point is that any system designed to detect attacks can be circumvented with enough resources.

If we accept the premise that a defensible environment has a limited attack surface, more fit-for-purpose systems, simple control points, more least-privilege access, better operational predictability, and better resiliency, then we can examine how these characteristics contribute to detecting unknown attacks. Every attack changes something, from network traffic and open ports to file counts and hashes. An environment with fewer moving parts makes these changes more noticeable, assuming someone is actually watching.

That's the tricky part. A defensible environment is no better able to detect or defend against an unknown attack if no one is looking at the data. However, an environment that is both defensible and defended has huge advantages over a modern, complex IT environment. Fewer changes mean that unauthorized changes are more easily identified. Fewer open ports mean that a new open port is easier to see. A defensible environment may not be inherently more secure, but understanding how defensible your environment is can make security measures more effective once they are implemented.

Title image courtesy of Shutterstock