The Case for Privacy Risk Management

Over the past few years, there has been a massive cultural and legal shift in the way consumers view and secure their personal data online, one that has coincided with the rise of advanced technologies like artificial intelligence. Concerned by a growing number of incidents, ranging from the 2017 Equifax hack to Cambridge Analytica’s scandalous gaming of consumers’ social media data for political purposes, policymakers have begun to strike back on consumers’ behalf. Europe’s General Data Protection Regulation (GDPR), the landmark privacy legislation that went into effect in May 2018, was the first large-scale effort to offer consumers more legal protections. Given the absence of a comprehensive federal privacy law, the California Consumer Privacy Act (CCPA), which will come into force on 1 January 2020, marks the first similar step in the United States. Similar laws are being pursued in a handful of other states.

For more than two decades, the Internet and associated information technologies have driven unprecedented innovation, economic value and access to social services. Many of these benefits are fueled by data about individuals that flow through a complex ecosystem, one so complex that individuals may not be able to understand the potential consequences for their privacy as they interact with systems, products and services. Organizations may not fully realize the consequences, either. Failure to manage privacy risks can have direct adverse consequences for people at both the individual and societal level, with follow-on effects on organizations’ reputation, bottom line and prospects for growth. Finding ways to continue to derive benefits from data while simultaneously protecting individuals’ privacy is challenging and not well-suited to one-size-fits-all solutions.

According to Bernhard Debatin, an Ohio University professor and director of the Institute for Applied and Professional Ethics, the first problem is that there has never been a clear, let alone enforceable, definition of privacy, since it is such a complex and abstract concept. “The notion of privacy has changed over time,” says Debatin. “In post-modern, information-based societies, the issue of data protection and informational privacy has become central, but other aspects [such as old-school, bodily privacy] still remain relevant. In other words, over time, the concept of privacy has become increasingly complex.” This broad and shifting nature of privacy makes it difficult to communicate clearly about privacy risks within and between organizations and with individuals. What has been missing is a common language and practical tool that is flexible enough to address diverse privacy needs.

Development of NIST Privacy Framework

The National Institute of Standards and Technology (NIST) has developed the NIST Privacy Framework: A Tool for Improving Privacy through Enterprise Risk Management (Privacy Framework) to drive better privacy engineering and help organizations protect individuals’ privacy by:

- Building customer trust by supporting ethical decision-making in product and service design or deployment that optimizes beneficial uses of data while minimizing adverse consequences for individuals’ privacy and society as a whole,

- Fulfilling current compliance obligations as well as future-proofing products and services to meet these obligations in a changing technological and policy environment, and

- Facilitating communication about privacy practices with customers, assessors and regulators.

The development of the Privacy Framework was a collaborative process that began in September 2018. After two public comment periods, three public workshops and five webinars, the Preliminary Draft of the Privacy Framework was released in September 2019. The development process was a dynamic one, and the development team tried to incorporate all views.

Definition of Key Terminology

The Privacy Framework follows the structure of the NIST Cybersecurity Framework to facilitate the use of both frameworks together. From the early stages of the development, it became apparent that there was a need to develop a privacy risk vocabulary to form a common ground of understanding. Therefore, the terms “data,” “data action,” “data processing” and “privacy risk” were defined as follows:

- Data: A representation of information including digital and non-digital formats.

- Data Action: A system/product/service data lifecycle operation including but not limited to collection, retention, logging, generation, transformation, use, disclosure, sharing, transmission and disposal.

- Data Processing: The collective set of data actions.

- Privacy Risk: The likelihood that individuals will experience problems resulting from data processing and the impact should they occur.
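
To make the "likelihood and impact" definition concrete, the sketch below scores a few data actions and ranks them. It is only an illustration under stated assumptions: the multiplicative score, the 0 to 1 likelihood scale, the 1 to 10 impact scale, the sample values and the names `DataAction` and `prioritize` are all hypothetical and are not taken from the Privacy Framework.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DataAction:
    """One data lifecycle operation with illustrative risk inputs."""
    name: str
    likelihood: float  # assumed scale: 0.0 (never) to 1.0 (certain)
    impact: float      # assumed scale: 1 (minor) to 10 (severe)

    @property
    def risk(self) -> float:
        # Assumption: combine likelihood and impact by multiplying them.
        return self.likelihood * self.impact


def prioritize(actions: List[DataAction]) -> List[DataAction]:
    """Return the data actions ordered from highest to lowest risk score."""
    return sorted(actions, key=lambda a: a.risk, reverse=True)


# Made-up sample values, for illustration only.
actions = [
    DataAction("collection", likelihood=0.2, impact=4),
    DataAction("sharing", likelihood=0.5, impact=8),
    DataAction("retention", likelihood=0.3, impact=5),
]
ranked = prioritize(actions)

for action in ranked:
    print(f"{action.name}: {action.risk:.1f}")
```

With these sample numbers, sharing ranks first, which would suggest examining that data action before the others. A real assessment would choose its own scales and would likely treat impact as more than a single number.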

Cybersecurity Risks vs. Privacy Risks

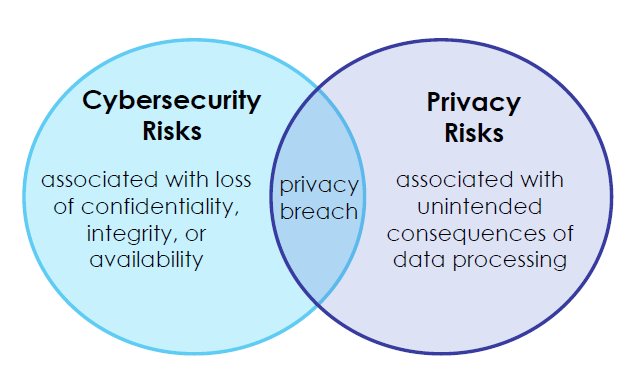

Another area that needed to be clarified was the difference between cybersecurity risks and privacy risks. Security risks are related to threats and vulnerabilities as well as the loss of confidentiality, integrity and availability. By contrast, Naomi Lefkovitz points out that privacy risks are associated with unintended consequences of data processing, which are often caused by the way designers architect systems, even secure ones. Operations that process personally identifiable information (PII) could pose privacy threats. "When security people think about threats, they think of bad actors or events out of their control, such as natural disasters," Lefkovitz says. "But [in regard to privacy], it's the operation of the system that's giving rise to the risk." As an example, Lefkovitz points to smart metering of electricity. The systems can be built securely to protect the private information collected, but the data itself can reveal people's behavior inside their homes. In addition, security tools designed to safeguard PII from malicious actors, such as persistent activity monitoring, could reveal information about individuals that is unrelated to cybersecurity. Cybersecurity and privacy risks do overlap, however, as the following Venn diagram shows.

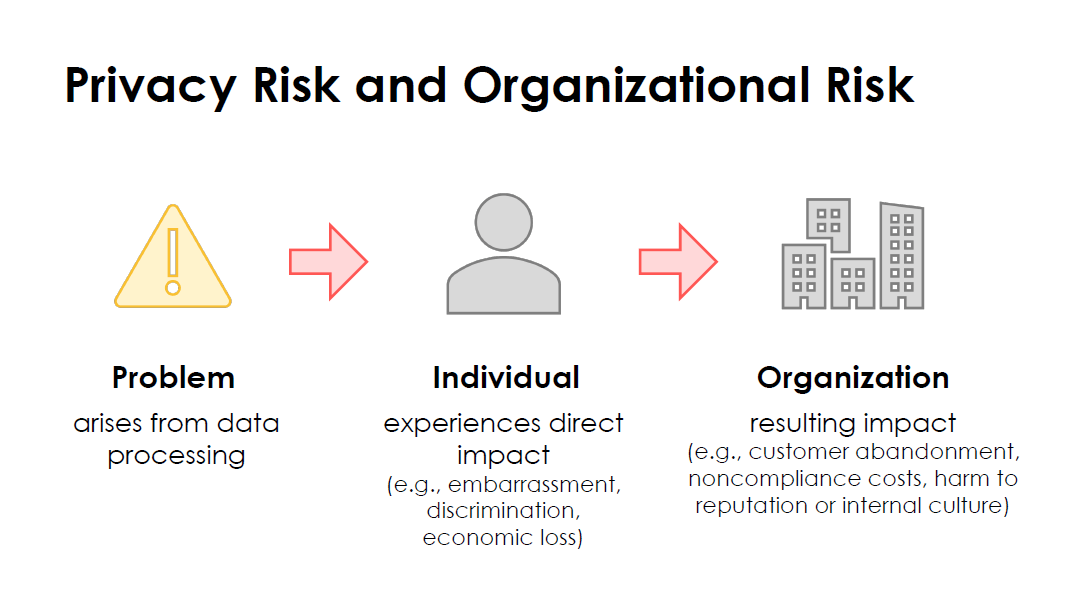

Individuals can experience problems as a result of problematic data actions. These problems may range from dignity-type effects such as embarrassment or stigma to more tangible harms such as discrimination, economic loss or physical harm. They can also arise from privacy breaches where there is a loss of confidentiality, integrity or availability at some point in the data processing, such as data theft by external attackers or the unauthorized access or use of data by employees who exceed their authorized privileges. As a result of the problems individuals experience, an organization may also experience impacts such as noncompliance costs, customer abandonment of products and services or harm to its external brand reputation or internal culture. These organizational impacts can be drivers for informed decision-making about resource allocation to strengthen privacy programs and to help organizations bring privacy risk into parity with other risks they are managing at the enterprise level.

Privacy Risk Management and Assessment

The Privacy Framework defines privacy risk management as “a cross-organizational set of processes that helps organizations to understand how their systems, products, and services may create problems for individuals and how to develop effective solutions to manage such risks.” Privacy risk assessment, in turn, is defined as “a sub-process for identifying, evaluating, prioritizing, and responding to specific privacy risks.” Privacy risk assessments can help organizations weigh the benefits of data processing against the associated risks and determine the appropriate response.

“Risk assessment and risk management are still challenging tasks given that there is not an industry-standard definition of risk nor a common, accepted means of quantifying IT risk. This is especially true of privacy where the laws are still evolving and haven’t had much time to build up court precedent,” says Anthony Israel-Davis, Senior Manager SaaS Ops at Tripwire.

Privacy risk assessment is an important process because many laws treat privacy as a fundamental human right and a condition that safeguards multiple values. Privacy risk assessments also help organizations distinguish between privacy risk and compliance risk. Identifying whether data processing could create problems for individuals, even when an organization is fully compliant with applicable laws or regulations, can help with ethical decision-making in system, product and service design or deployment. In this sense, privacy risk assessments are the basis for privacy-by-design product or service development.

Privacy Framework Structure

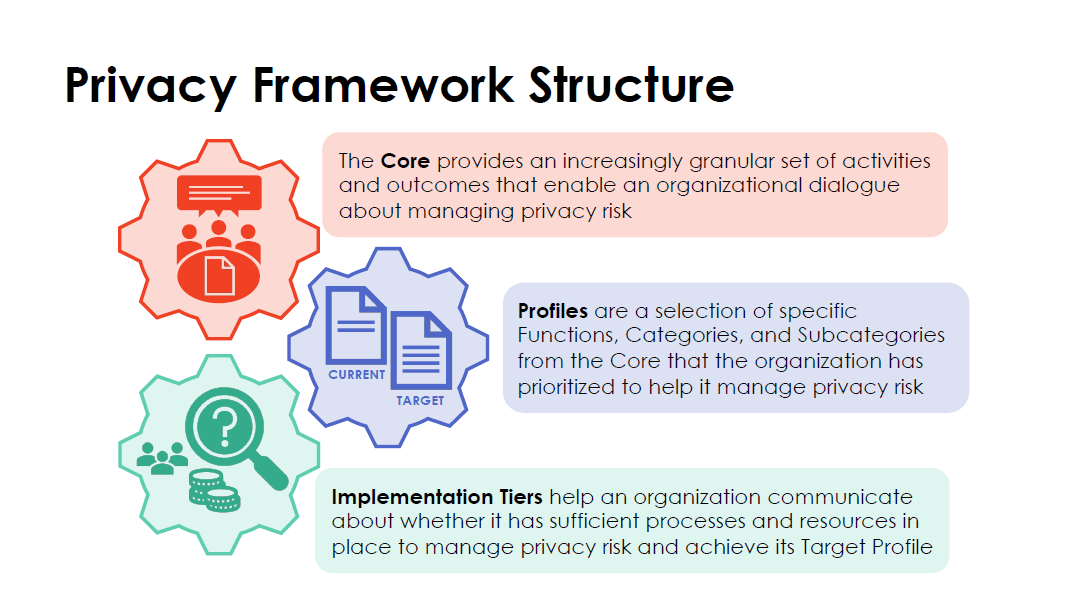

The Privacy Framework consists of three parts, namely the Core, Profiles and Implementation Tiers, as illustrated below.

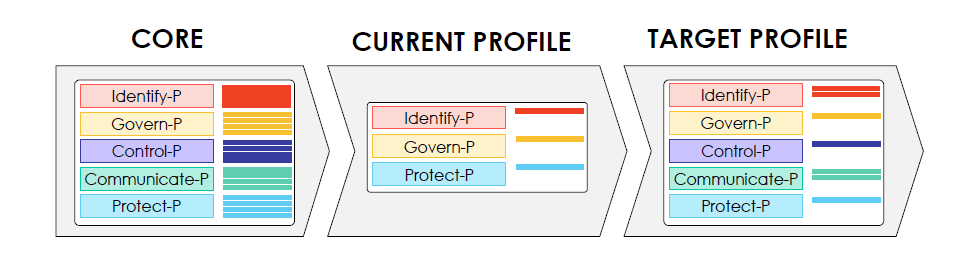

The Core consists of five Functions:

- Identify-P,

- Govern-P,

- Control-P,

- Communicate-P and

- Protect-P.

The first four Functions can be used to manage privacy risks arising from data processing, while Protect-P can help organizations manage privacy risks associated with privacy breaches. Protect-P is not the only way to manage breach-related privacy risks, however: organizations may use the Cybersecurity Framework Functions in conjunction with the Privacy Framework to address privacy and cybersecurity risks together. The Core is further divided into key Categories and Subcategories for each Function.

A Profile represents the organization’s current privacy activities or desired outcomes. To develop a Profile, an organization can review all the Functions, Categories and Subcategories to determine which are most important to focus on based on business/mission drivers, types of data processing and individuals’ privacy needs. Profiles can be used to identify opportunities for improving privacy posture by comparing a “Current” Profile (the “as is” state) with a “Target” Profile (the “to be” state).
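
The comparison of a “Current” Profile with a “Target” Profile can be pictured as a simple gap analysis. The sketch below is one possible way to express it, assuming each Profile is a mapping from a Function name to a set of outcomes. The outcome strings and the `profile_gaps` helper are hypothetical placeholders, not actual Category or Subcategory identifiers from the Framework.

```python
from typing import Dict, List, Set

# The five Core Functions named in the article.
FUNCTIONS = ("Identify-P", "Govern-P", "Control-P", "Communicate-P", "Protect-P")

Profile = Dict[str, Set[str]]


def profile_gaps(current: Profile, target: Profile) -> Dict[str, List[str]]:
    """List, per Function, the target outcomes missing from the current Profile."""
    gaps: Dict[str, List[str]] = {}
    for function in FUNCTIONS:
        missing = target.get(function, set()) - current.get(function, set())
        if missing:
            gaps[function] = sorted(missing)
    return gaps


# Hypothetical outcomes, for illustration only.
current = {
    "Identify-P": {"inventory data actions"},
    "Govern-P": {"assign privacy roles"},
}
target = {
    "Identify-P": {"inventory data actions", "assess privacy risk"},
    "Govern-P": {"assign privacy roles"},
    "Communicate-P": {"publish data processing notice"},
}

gaps = profile_gaps(current, target)
for function, outcomes in gaps.items():
    print(function, "->", ", ".join(outcomes))
```

In this made-up example the gaps fall under Identify-P and Communicate-P, which is where an improvement plan would start. How an organization records and prioritizes its own Profiles is left open by this sketch.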

Implementation Tiers provide a point of reference on how an organization views privacy risk and whether it has enough processes and resources in place to manage that risk. Tiers reflect a progression from informal, reactive responses to approaches that are agile and risk-informed.

Conclusion

Reviewing the NIST Privacy Framework, Anthony Israel-Davis, Senior Manager of R&D at Tripwire, commented:

“The NIST Privacy Framework does a good job of making the case for privacy risk management and a flexible means by which an organization can assess its own privacy posture and develop a program to improve it. Still in draft form, it’s a bit wordy and could use an editor’s pen to help it become more succinct. The most helpful portions are in the appendices where the framework is detailed and from which a flexible assessment tool could be built. Privacy is becoming a paramount concern with real legal and financial risk, any tool that helps an organization manage that risk is a welcome addition and I look forward to a finalized version of the NIST framework.”
