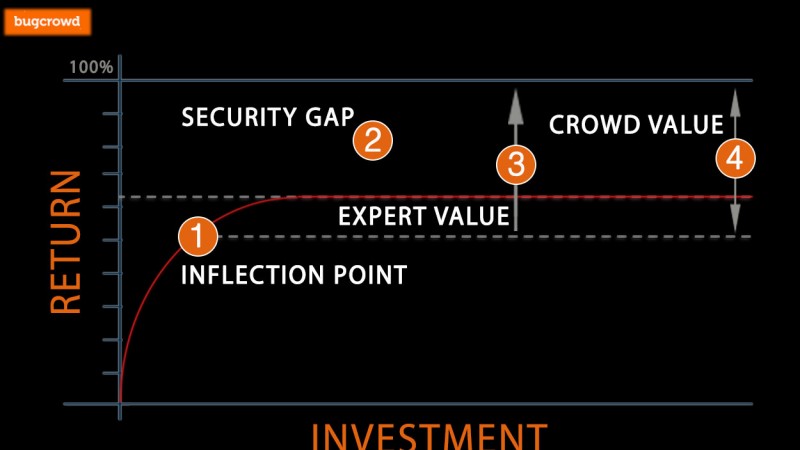

It could be said that the proliferation of automation is the defining characteristic of the last 100 years. In almost every area of our lives, we’ve found a way to leverage technology to increase our efficiency, freeing us up for higher-order tasks: the things we like to do, the things that are hard, the things we’re good at. A great example of this is the evolution of personal travel. What used to take an hour or more on foot now takes 10 minutes in the car (as long as you’re not on the 101 in the afternoon), and in the not-too-distant future we’ll have cars that drive themselves, freeing us up even more.

In the security space, we can invest in automating tasks such as running vulnerability assessments, scheduling static code review on code pushes, and deploying tools to analyze an otherwise overwhelming set of input data for quicker detection and more efficient response.

Here’s the challenge: while people-generated problem sets can benefit a great deal from the help of automation, they can never be solved by automation alone. The process of building a business or product, along with the systems that support it, is fundamentally a creative one. People power it, and all of the strengths and weaknesses of human creativity are at its core. (Interestingly, the process of identifying vulnerabilities to formulate and execute a malicious attack is also a creative one.) We can apply the same creativity to make automation better, but ultimately computers do what they are told to do, and do it repetitively, cheaply and at scale.

The automated processes and tools we use to identify where our businesses are vulnerable are good up to a certain point: right up until the need for human interaction kicks in. The bad news is that the “security blind-spot” this creates is also where the bad guys enjoy spending their time and effort. Both JP Morgan Chase and Target are examples of what it looks like when this goes wrong. In both cases, the initial ingress points used by the attackers, a staging webserver and an external-facing HVAC control portal, should have easily been identified as risky and flagged for remediation. In both cases, however, these systems ended up in an automation blind spot. The Heartbleed and Shellshock vulnerabilities existed in code for years, during which that code was audited countless times, yet they persisted until they were found, and eventually exploited, by people.

Automated processes and automation tools can take care of a lot of things for us. If you consider the “80/20 rule,” automation is definitely responsible for getting us to the 80 percent mark. But whether it’s 80 percent or more, there will ultimately be an inflection point where the law of diminishing returns takes over (see #1 on the diagram below), and the return on our investments in adding or improving automation begins to taper off and flatten out.

Every piece of software, every system, every platform contains security flaws. So, what can we do about the 20 percent? How can we address this “security blind-spot” (see #2 in the diagram)?

Attackers leverage the same automation techniques to gain the same efficiencies your company is trying to enjoy. After all, why would anyone want to spend time and money trying to break into something that has already been fixed? Their efforts are focused on the things they figure you probably haven’t looked at yet. And attackers don’t stop at automation; they invest in human capital to take things to the next level. On the attacker side, there are lots of people with widely varied skillsets, many different motivations (not just money), and an incentive model that’s based on getting results.

This isn’t to suggest that, on top of your automation suites, you should go out and hire 1,000 white-hats by the hour to go scouring through your systems. That’s not practical for most organizations. Instead, when you recruit or contract these people, they should be working on defense, education and driving the residual value of your assessment down into your engineering teams and up into management, where they can have a lasting impact. These experts will help close the gap (see #3 in the diagram above).

The remaining gap (#4 in the diagram) is where all the really bad stuff happens: the stuff hackers find but which tools and one or two highly paid experts can’t. This is where the crowd comes into play. In this arena, humans become the automation. By leveraging a pay-for-performance model and engaging the collective creativity of the security community via crowdsourcing, it’s possible to apply human capital in an efficient and cost-effective way to further bridge the gap between what’s possible with automation, what’s possible with a couple of human experts, and the highest levels of security desired.

The final lesson? Don’t get caught thinking that automation is a silver bullet, only to find yourself stuck with a large and dangerous security blind spot. Instead, use the wisdom of the crowd, a group of highly qualified security testers that embody human efficiency, to close the gap. Your potential attackers are looking at this gap with their human eyes wide open. They don’t only go after the low-hanging fruit identified by automation and commercially available tools. And, if they do, they won’t do it the same way you will. They will instead focus on the areas where they know you don’t focus. They too want to be effective and efficient. The bad guys are creating and proving the human automation model time and again, moving beyond automation to the selective, focused and properly incentivized deployment of human capital.

About the Author: Casey Ellis is the CEO and co-founder of Bugcrowd, the innovator in crowdsourced security testing for the enterprise. He has been in the information security industry for 14 years, working with clients from the very small to the very large, and has presented at Derbycon, Converge, SOURCE Conference, and the AISA National Summit. Before relocating from Sydney, Australia to San Francisco with Bugcrowd, he founded White Label Security, a white-labelled penetration testing company, and served as the CSO of Scriptrock. A former penetration tester, he likes thinking like a bad guy without actually being one.

Editor’s Note: The opinions expressed in this guest author article are solely those of the contributor, and do not necessarily reflect those of Tripwire, Inc.
