I was on a security panel recently where we were asked to define the Internet of Things (IoT). This term is as vague as it is broad. It can be argued that it includes almost any “thing” that can be part of a network. I was not happy with any of our answers, including my own, so I spent some time thinking about it. When I was asked this question at a later conference, my reply was different. I answered that IoT is more about psychology than technology.

Answers to the “What is IoT?” question often revolve around lists of examples. The lists have included:

- Smart thermostats

- Smart electric meters

- SCADA / Industrial Control Systems

- Factory automation assembly robots

- Self-driving cars

- All connected cars

- Wireless fitness devices and other wearables

- Connected insulin pumps

- Wireless pacemakers

- Home cleaning robots

- Internet-controllable lightbulbs

- Smart toasters

- Smartphones

- Home entertainment systems

- Computers

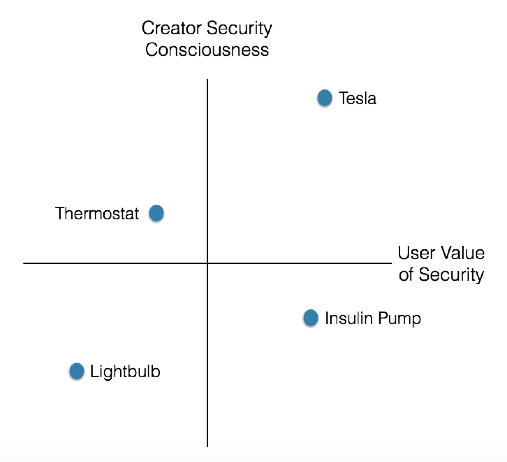

Is this list helpful for understanding the problems of securing IoT? The list covers so many kinds of things and use cases that it is hard to see any unifying characteristics. Some of these things are designed with security in mind, while others hardly acknowledge the issue. Some are actively managed and maintained by a user or administrator. Others are installed and forgotten. We need to get a better sense of what we mean by IoT in order to have a useful conversation about the field.

More specifically, for security discussions, we should define and identify the Problematic Internet of Things (PIoT). I believe that not everything on the list above should be included. We need to focus on the psychology of the creators and users of the devices rather than on the technical details of how they work or what they are designed to do.

Desktop computers are on one end of the IoT spectrum. Both the creators and users of computers expect that they will be constantly updated, checked with anti-malware and other security tools, and otherwise maintained. Computers are designed for this kind of active management. Security in computers, while far from solved, is very much on the mind of all parties. Everyone knows that their valuable data and resources are at risk. Attacks on computers happen all the time.

Compare that to a smart toaster (a canonical example which may not actually exist in the world). It is likely that neither the creator nor the user of the toaster has given much thought to the security implications of the toaster being hacked. They may not realize there might be impacts beyond over- or under-toasted bread. Security professionals, of course, will see such a toaster as a vulnerable device inside the home network’s perimeter, one that can be leveraged to gather information, capture network credentials, launch follow-on attacks, and more. The toaster has no user interface for managing it or checking its security state. Neither the creator nor the user has any expectation that the toaster will be regularly patched and updated. To most people, the very idea is absurd.

A related idea is that security is not part of the perceived value of a device. Users do not demand security in their lightbulbs; consequently, they won’t pay extra for it, so creators (especially startups) don’t incorporate security into their initial design and architecture.

The PIoT comes directly from these mindsets of the creators and users. The first assumption creators and users make is that their device won’t be attacked. They might think there is no reason to attack it (a smart lightbulb). Or they may think the device will not be attacked because their technology is not part of the public Internet (a SCADA device). Creators and users also often assume that these devices will not, cannot, or do not need to be maintained. The devices are invisible to users, providing no interface for managing them.

Connected cars are an interesting example of how a single type of “thing” could be part of the PIoT in some cases but not others. Most modern cars contain many different networks, including a Controller Area Network (CAN) bus, Bluetooth for phone integration, and wireless tire pressure sensors. Some even have a cellular connection like OnStar. Until recently, none of the operating systems connected to these networks could be updated automatically. Software updates and patches required a recall of every car that was affected. There was no way for a user to take responsibility for the security of their car’s networks and computers.

This is now changing for some vehicles. Tesla, for example, has focused on cybersecurity from the very beginning. In addition to thinking about security in the design process, they also provide frequent over-the-air patches and updates to their vehicles. A Tesla is an actively managed and maintained device. From a psychological perspective, I would not consider the Tesla in my garage to be a PIoT device. On the other hand, my other cars (the ones that never get managed, maintained, or patched) would fit in that category. Interestingly, with the recent attention given to car hacks, the perceived value of vehicle cybersecurity is increasing rapidly across the board, but it may take a long time for the user demand to translate to new car security designs.

For purposes of analyzing whether devices are part of the PIoT, we can consider them to live in a two-dimensional space defined by the security consciousness of the creator on one axis and the perceived end user value of security in the device on the other.
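As a rough illustration of this two-axis view, here is a minimal Python sketch. It assumes each axis can be reduced to a simple yes/no judgment, which is a simplification of what is really a continuous space. The names `Device`, `Quadrant`, `quadrant`, and `is_piot` are hypothetical, invented for this example only.

```python
from dataclasses import dataclass
from enum import Enum


class Quadrant(Enum):
    """The four regions of the creator/user space (labels are illustrative)."""
    BOTH = "creator and user both value security"
    CREATOR_ONLY = "creator values security, user does not demand it"
    USER_ONLY = "user demands security, creator does not see the need"
    NEITHER = "neither creator nor user values security"


@dataclass(frozen=True)
class Device:
    name: str
    # Assumption: each axis is collapsed to a boolean judgment call.
    creator_security_conscious: bool
    user_values_security: bool


def quadrant(device: Device) -> Quadrant:
    """Place a device in one of the four quadrants."""
    if device.creator_security_conscious and device.user_values_security:
        return Quadrant.BOTH
    if device.creator_security_conscious:
        return Quadrant.CREATOR_ONLY
    if device.user_values_security:
        return Quadrant.USER_ONLY
    return Quadrant.NEITHER


def is_piot(device: Device) -> bool:
    """Treat every quadrant except BOTH as part of the PIoT."""
    return quadrant(device) is not Quadrant.BOTH


if __name__ == "__main__":
    # Placements below follow the examples used in this article.
    for d in (
        Device("desktop computer", True, True),
        Device("smart lightbulb", False, False),
    ):
        print(f"{d.name}: {quadrant(d).value}; PIoT: {is_piot(d)}")
```

Under these assumed placements, the smart lightbulb is flagged as PIoT and the desktop computer is not. The value of the sketch is only in making the two judgments explicit; deciding where a real device sits on each axis remains a matter of opinion.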

When both the creator and the user understand the importance of security, we generally see fewer IoT problems. These devices are carefully designed and easily manageable, and they are generally not part of the PIoT. In any of the other three quadrants, we run into problems. If users don’t demand security, it is likely to be left out, even if the creators appreciate its importance. If the users demand security and creators fail to see the need, security will be a bandage slapped onto a product at the last moment. It will only provide users with the illusion of protection, just enough to close the sale. All hope is lost when neither the user nor the creator sees the need for security. The creators will not even make a gesture towards security. This is exactly what we see in things like smart lightbulbs, which seem to generate new vulnerabilities all the time.

This analysis suggests some leverage points to improve the situation. Incentives (or penalties) could encourage creators to take security more seriously in the design of their products. Programmers without security experience could benefit from widely available tools to create safer products. This would make doing the right thing much easier. We also need to educate users on what they need to look for in order to protect themselves. While users often recognize the need for privacy and security, very few can tell the difference between strong security and snake oil. Underwriters Laboratories has just released a certification for IoT devices, but it will not be visible on any products, at least for now. A visible and prominent stamp would allow users to take security into consideration in their buying decisions.

By considering the psychology of users and creators of IoT devices, we can craft better approaches and understand which devices are part of the PIoT. That can help improve the overall security of the IT ecosystem. None of these suggestions is a silver bullet, but this mindset-based approach can yield substantial improvements, not only to the security of the devices themselves but also to the security of everything that touches our networks. And as we move into the future, the Internet of Things will soon become everything.

About the Author: Lance Cottrell founded Anonymizer in 1995, which was acquired by Ntrepid (then Abraxas) in 2008. Anonymizer’s technologies form the core of Ntrepid’s Internet misattribution and security products. As Chief Scientist, Lance continues to push the envelope with the new technologies and capabilities required to stay ahead of rapidly evolving threats. Lance is a well-known expert on security, privacy, anonymity, misattribution and cryptography. He speaks frequently at conferences and in interviews. Lance is the principal author on multiple Internet anonymity and security technology patents. He started developing Internet anonymity tools in 1992 while pursuing a Ph.D. in physics, eventually leaving to work on those technologies full time.

Editor’s Note: The opinions expressed in this guest author article are solely those of the contributor, and do not necessarily reflect those of Tripwire, Inc.

Title image courtesy of ShutterStock