I have been in this business for over 10 years, specifically in the business of trying to ensure our critical infrastructure remains in a safe, reliable and secure state. After all, if our critical infrastructure were to fail, the implications could be huge. Since 2011, I think the real threat of large-scale attacks against critical infrastructure has hit mainstream media, and it continues to grow not only in coverage but in the sheer number of attacks. Just recently, malware and ransomware have become the talk of the town. There is a very real chance of bad actors taking control of our power grids, harming our wastewater treatment plants, and locking our systems until we pay someone to unlock them. Acknowledging these threats, it would be inconceivable that a company would not have a security posture… right? The truth is, from a security deployment perspective, the general consensus is that security receives a woefully small slice of a company’s overall budget.

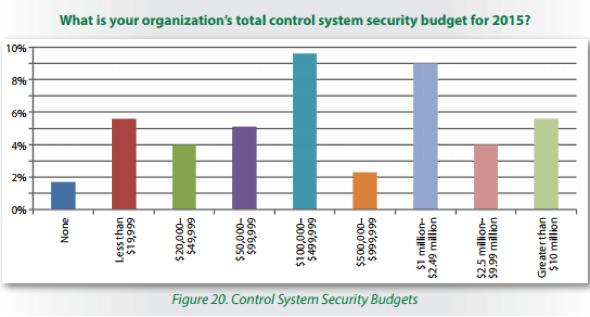

Source: IT Security Spending Trends, SANS Institute, February 2016

While this may look like a lot of money, think of companies like BP, Shell, Exxon, or the like. These are triple-digit billion dollar companies. This finding led me to pause and reflect. What follows are my top 5 thoughts on security implementation barriers in no particular order.

1. The Ostrich Algorithm

This is one of my favourite references from computer science. When I had to learn all about operating systems and schedulers, I always remembered this: “The ostrich algorithm is a strategy of ignoring potential problems on the basis that they may be exceedingly rare.”

The sticking point is "may be exceedingly rare." Essentially, the algorithm is to put your head in the sand and ignore any problems that may occur. I have heard this in discussions with customers many times. “Oh, it won’t happen to us” or “We are so small that it wouldn’t be worth someone’s time.” When you hear this type of perspective, think of the hacked contractor laptop that might have ransomware hiding on it: nobody has to target you deliberately for it to end up on your network. I think it is past the point where we can simply ignore possible external threats; we need to be proactive and plan a security posture.

2. A Central Firewall is Enough

There is a common misconception that having a single central firewall is all you really need. I have heard this many times, as well. In reality, it is never good to rely on a single point of failure, and a lone firewall is exactly that. In fact, there is a reason that defense-in-depth has been a proven methodology for thousands of years. (I touched on defense in depth earlier.) Generally, if you have segmented your network into logical groupings and protected each zone and conduit with a firewall, you can limit the impact of a potential issue and receive an alert pointing to exactly where the problem occurred. In the case of a single firewall, the network has no internal stop-gaps, which could allow a threat like malware to propagate easily. So, I have to discredit this argument.
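To make the zone-and-conduit idea concrete, here is a minimal sketch in Python. It is not how any particular firewall product works; the zone names, IP addresses, ports, and the `check_flow` helper are all hypothetical and exist only to illustrate how traffic between segments can be allowed or blocked against an explicit list of conduits.

```python
from dataclasses import dataclass

# Hypothetical mapping of hosts to zones (illustrative addresses only).
ZONE_OF = {
    "10.0.1.10": "enterprise",
    "10.0.2.10": "scada",
    "10.0.3.10": "plc_cell_a",
}

# Hypothetical conduits: (source zone, destination zone, destination TCP port).
ALLOWED_CONDUITS = {
    ("enterprise", "scada", 443),
    ("scada", "plc_cell_a", 502),  # 502 is the standard Modbus/TCP port
}


@dataclass(frozen=True)
class Flow:
    src_ip: str
    dst_ip: str
    dst_port: int


def check_flow(flow):
    """Return (allowed, message) for a flow, based on zones and conduits."""
    src = ZONE_OF.get(flow.src_ip, "unknown")
    dst = ZONE_OF.get(flow.dst_ip, "unknown")
    # Traffic that stays inside one known zone never crosses a conduit.
    if src == dst and src != "unknown":
        return True, f"intra-zone traffic in {src}"
    # Cross-zone traffic must match an explicitly allowed conduit.
    if (src, dst, flow.dst_port) in ALLOWED_CONDUITS:
        return True, f"allowed conduit {src} -> {dst}:{flow.dst_port}"
    # Everything else is blocked, and the message says where it happened.
    return False, f"BLOCKED {src} -> {dst}:{flow.dst_port}"


if __name__ == "__main__":
    # An enterprise host trying to talk Modbus directly to a PLC cell.
    print(check_flow(Flow("10.0.1.10", "10.0.3.10", 502)))
    # The SCADA server talking Modbus to the PLC cell it supervises.
    print(check_flow(Flow("10.0.2.10", "10.0.3.10", 502)))
```

With a single central firewall, the first flow would never be inspected once something is inside the perimeter. With per-zone enforcement, it is both stopped and reported with the zones involved.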

3. Security Certainty

“Oh, we have a firewall” or “That area is air-gapped.” That's all well and good… until they put an additional firewall in place and find out X IP address from network Y is sending multicast information that is somehow making its way across the air-gap. How can this be?!

I think the hope is that just adding a firewall or just anomaly detection or just a DPI device is a panacea. Actually, securing your network has to be a concerted effort, always ongoing and very pessimistic in nature; pessimistic in the sense of covering all your bases and leaving nothing to chance rather than convincing yourself that "Oh, it's fine. We have an air gap." In many cases, my experience has been that an integrator comes in and sets up a network, and then a third party configures and sets up the OT portion. Then, in the end, it is up to the overall owner to manage the network. With all these disparate parties doing their part, the end customer does not have a true understanding of the form and function of the network and has simply been told they have a firewall.
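One way to replace certainty with evidence is to check what is actually on the wire. The sketch below assumes you already have a list of (source IP, destination IP) pairs captured on the supposedly air-gapped segment; the addresses, the subnet, and the `foreign_sources` helper are hypothetical. It flags any packet whose source lies outside the isolated subnet, which is the kind of surprise described above.

```python
import ipaddress


def foreign_sources(observed, isolated_cidr):
    """List traffic seen on an isolated segment that originated outside it.

    observed: iterable of (src_ip, dst_ip) strings captured on the segment.
    isolated_cidr: the subnet that is supposed to be air-gapped.
    """
    net = ipaddress.ip_network(isolated_cidr)
    findings = []
    for src, dst in observed:
        if ipaddress.ip_address(src) not in net:
            # Label multicast separately, since it often leaks unnoticed.
            is_mcast = ipaddress.ip_address(dst).is_multicast
            kind = "multicast" if is_mcast else "unicast"
            findings.append((src, dst, kind))
    return findings


if __name__ == "__main__":
    # Hypothetical capture from a segment believed to be air-gapped.
    capture = [
        ("192.168.50.5", "192.168.50.9"),  # expected local traffic
        ("10.20.0.7", "239.192.0.1"),      # multicast from another network
    ]
    for finding in foreign_sources(capture, "192.168.50.0/24"):
        print("unexpected traffic:", finding)
```

An empty result does not prove the air gap holds; it only means nothing was seen during the capture window, which is why the check has to be repeated rather than done once.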

4. Cost

I often think of selling firewalling products (like Tofino) as selling insurance. Insurance costs money, and in the lucky cases, you will never actually end up using it. If you did need insurance and you did not have it, you would most likely be kicking yourself, because most incidents will cost far more in the end than the insurance would have in the first place. I hear the cost argument a lot. The truth is that in many companies, the security budget is minuscule, especially compared to the cost of the PLCs and HMI software. That is ironic, because one would think that if the controllers are the most important element for reliability, then the budget to secure them should surely be larger (utopia?). While the up-front cost may seem high, if something negative were to happen to a production network, the lost revenue (in most cases) would far exceed the initial cost of a firewalling device that could have prevented the downtime in the first place.

5. Ignorance

I do not mean this in a derogatory way. This is merely the sheer fact of “Where do I begin?” If you look at how many companies provide stateful firewalls, deep packet inspection firewalls, next-generation firewalls, anomaly detection firewalls, monitoring tools and change detection mechanisms, it can be a daunting task, especially if you are a controls engineer who is an expert at controls, not security or IT, and yet has been tasked with designing your security framework. As I mentioned above, you cannot just buy a device and BOOM, you are now secured. Job done. It has to be an iterative approach, one that takes a myriad of tools and processes to truly understand and secure your network. Coincidentally (I swear), Belden does have this 1-2-3 approach of where to start to help guide a user or company along the path to securing their OT network.

6. (Bonus) IT enforcement in an OT world

You have an IT expert attempting to apply IT paradigms to an OT network. “Let’s just reboot that switch!” As we all know, the IT world does not have the same stringent requirements of uptime that an OT network has. I have seen cases where the IT and OT people cannot even sit in the same room, let alone devise a security policy that fits nicely in the OT environment. This is a very real barrier… one that only a gradual convergence of IT and OT can solve.

About the Author: Erik Schweigert leads the Tofino Engineering team within Belden's Industrial Cybersecurity platform. He developed the Modbus/TCP, OPC, and EtherNet/IP modules and directed the development of the DNP3 and IEC-60870-5-104 deep packet inspection modules for Tofino security products. His areas of expertise include industrial protocol analysis, network security, and secure software development. Schweigert graduated with a Bachelor of Science in Computer Science from Vancouver Island University.

Editor’s Note: The opinions expressed in this guest author article are solely those of the contributor, and do not necessarily reflect those of Tripwire, Inc.
