Most organizations are overwhelmed, understaffed, and/or underfunded when it comes to cybersecurity. These constraints create a critical need to prioritize on the most critical cybersecurity measures. However, often these priorities are unclear or hard to determine, leading to less-than-optimal cybersecurity product purchases and/or activities. This is because the metrics about which overarching cybersecurity priorities matter most are by-and-large not well-established or well-accepted by the cybersecurity industry – making it very difficult for customers to know what to do first and what is a “nice to have.”

Why does this matter?

Don’t we already have this problem solved? Clearly, a lot of time has been spent by various organizations to come up with 10,000’s of controls. However, anyone who has tried to implement cybersecurity across an organization has likely experienced that there are too many topics to cover and there are no good sources to explain what the top areas to focus on should be. In fact, many players in the cybersecurity industry’s “marketing machine” spend considerable effort to sell customers on one kind of product or another without really helping them with overall prioritizing. Customers can only do a few things. “I only have time to do the top 10 – but what are those?!” In order to figure out what those top 10 are for a customer's organization, we as the defender ecosystem need generally accepted structure and metrics.

Why is this hard? Hasn’t it been done before?

Unfortunately, like well thought-through answers, the answer to the question what is the exact number and prioritization is often “It depends…”. It depends on: What systems? What applications? What data? Scale of IT landscape? Functional aspects? Non-functional aspects? Financial/organizational constraints? OWASP, the Open Web Application Security Project, or the BSI Baseline Protection Manual catalogues, for example, give us a simple to-do’s based on concrete metrics because they cover a specific problem, use-case, and technology (web applications). So, if your cybersecurity needs to cover more than the covered point solutions (usually the case for enterprise cybersecurity, where a lot of the intelligence is in the system-of-systems “glue” between systems) – and you cannot go down a high-assurance architecture route either (usually the case for enterprise cybersecurity, as well) – then what? Numerous generic compliance frameworks, standards and guidance give a great broad overview of many things you may consider doing. The presented guidance is very detailed in some well-understood areas, but spotty in other, less clear-cut areas. Nevertheless, NIST 800.53 and similar frameworks are great guidance if you have the time and budget to get through it (or are mandated to use it).

What should you do?

Time and money (and also cybersecurity competency) limit how much you can do. Cybersecurity teams are often overworked, understaffed, fire-fighting breaches, etc. These limitations determine how far down the list of “to-do’s” you can realistically ever get. It is not the primary purpose of this article to postulate a “top 10 to-do” list but rather to discuss the needs and challenges to get to industry-wide vetted metrics of what matters most in cybersecurity (potentially with some adaptations based on industry, IT landscape, regulatory/legal environment, etc.) Let’s look at typical intrusion patterns to potentially guide us. Intrusion phases can be categorized into six phases[1]: Reconnaissance; Initial Exploitation; Establish Persistence; Install Tools Move Laterally; Collect; and Exfiltrate & Exploit. Attacks often start with finding holes in less-protected systems or tricking users into doing something to open up a hole. The attacker may try to get such a foothold in uncritical devices because they are usually less protected. There may be nothing to do/steal there, but it usually allows the attacker to move on laterally (“pivot”) to more critical assets, eventually getting access to valuable resources. It is important to distinguish the phases and appreciate how these phases are connected to determine the countermeasures that need to put in place. For example:

1. Countering initial exploitation: it is important to prevent as much in the early stages (initial exploitation) as possible, e.g. by using antivirus tools, email attachment scanners, good authentication etc. However, at the current state of the cybersecurity ecosystem, you need to assume that these countermeasures will fail at some point (e.g. “zero-day malware” which your antivirus tool doesn’t know yet). It could be that unsecured “smart” lightbulb that may be the starting point for the hacker. In fact, seemingly uncritical IoT devices are currently a major source of vulnerabilities (and have been used by hackers to cause a major internet outage in November 2016). Another major source of attacks is that some user in their organization will eventually click on a (spear-) phishing email attachment or website link – people are processing so much information every day that human errors are to be expected (even if only due to freak event circumstances such a names matching colleagues etc.), even for security-educated individuals. And sufficiently locking down email attachments or websites is not really feasible for most organizations either because that would reduce productivity. So you have to assume that some initial exploitation will happen eventually.

2. Countering pivoting (lateral movement): Therefore, in addition, you need to minimize the impact of such successful exploitations. A great way to do this is by implementing more fine-grained access controls across your networks, systems, devices and applications. Instead of giving particular users or devices broad access to much information and many systems, devices, and applications, you need to reduce access to the “minimum needed” to get the task done. This will likely involve contextual, dynamic, fine-grained access control technologies (for example “attribute-based access control”, ABAC) combined with security policy automation tools to make ABAC manageable[2]. This is contrary to the traditional “hard shell, soft inside” security model where firewalls are put in place to control who can get in and out of an enterprise network. With “bring your own device” (BYOD) and cloud computing rapidly used by organizations today, traditional trust boundaries are messy or non-existent, making “hard shell, soft inside” ineffective.

3. Knowing when it happens & impact control: Security is never 100%. So assuming that both those and everything else you put in place fail, you need to have tools (and people!) in place who can detect that you got breached. In addition, you need to figure out ways of how your organization will recover from a catastrophic hacker/failure event. Just to name a few examples, mirror sites, hot/cold backups, etc. are necessary, as well a way to restore systems to a clean state after being attacked.

How do you prioritize? Without clear metrics, it is hard to estimate how likely which kind of vulnerability and associated impact will be.

Some final thoughts…

It should be helpful to at least broadly structure major priorities based on a thought process. In particular, there are ongoing discussions in the cybersecurity industry about whether – and in which order of priority – you should:

- Prevent: One school of thought makes preventing breaches by reducing attack surface and vulnerabilities the “plan A”. This approach is usually followed by more mission-critical/safety-critical industries and military/intelligence.

- Detect and respond: Another school of thought around cybersecurity professionals is that prevention is relatively futile and you should rather make your efforts on detection and response your "plan A”.

- Control impact (recovery): Yet another (more extreme) school of thought thinks that both prevention and detection are quite futile and we should mainly focus on impact control and recovery.

- Sell it to management and auditors: And yet another school of thought thinks that the primary objective is to convince management auditors that security meets (compliance) requirements.

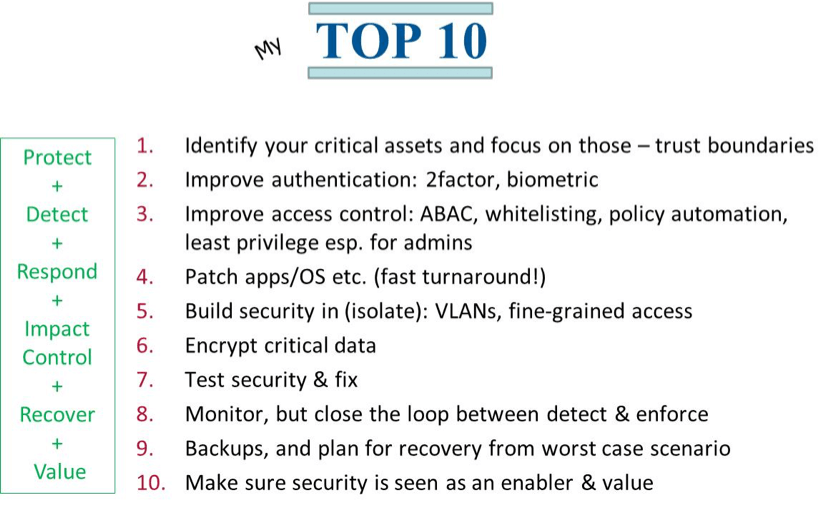

The author’s (personal) view is that prevention should still be “Plan A,” followed by “detect & respond," followed by impact control, and lastly sell it to management. But this is open to debate until we have more solid, generally accepted industry-wide metrics. Even so, here is my personal current top 10 list based on that rough prioritization[3]:

A more detailed whitepaper about this topic can be found at objectsecurity.com/whitepaper.

[1] https://www.youtube.com/watch?v=bDJb8WOJYdA [2] Contact the author if you would like to learn more about ABAC or security policy automation. [3] Initially presented by Dr. Lang at ToorCon 2016 San Diego

About the Author: Dr. Ulrich Lang is Founder & CEO of ObjectSecurity, a security policy automation company. He is a renowned access control expert with over 20 years in InfoSec (startup, large bank, academic, inventor, technical expert witness, conference program committee, proposal evaluator/reviewer etc.). Over 150 publications/presentations InfoSec book author. PhD on access control from Cambridge University (2003), a master’s in InfoSec (Royal Holloway). Co-founder, co-inventor and CEO of ObjectSecurity (Gartner “Cool Vendor 2008”), an innovative InfoSec company that focuses on making security policies more manageable. He is on the Board of Directors of the Cloud Security Alliance (Silicon Valley). Editor’s Note: The opinions expressed in this and other guest author articles are solely those of the contributor, and do not necessarily reflect those of Tripwire, Inc.