In Part 1 of this series, I walked through the background of the NERC CIP version 5 controls and outlined what needs to be monitored for NERC CIP software requirements. In this second half of the series, we’ll take what we’ve learned and explore approaches for meeting the requirements while considering security value. NERC CIP is supposed to be for security, after all!

NERC CIP Tools

At a high level, Tripwire's allowlisting capabilities have many of the features needed to meet the software monitoring requirements. But process is important as well. In some cases there are multiple approaches to a requirement, so the entity can choose what best fits its interpretation and process.

OS and Firmware Version Monitoring

OS version is generally tracked in one of two ways, and both are easy. With a strict change management approach, Tripwire Enterprise can read the OS version and show any changes, which of course are very rare. A more scalable variation adds a policy test of the OS version, so the result flows into a unified view of compliance. Probably the slickest approach is to monitor the OS version with Tripwire's allowlisting. The OS version is then reported right along with other software, or the implementation can even be broken out to show OS version per its CIP part number.
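As a rough illustration of the change management idea (read the OS version, compare it with the approved baseline, report only when it differs), here is a minimal Python sketch. It is not Tripwire's implementation or API; the function names and the record layout are assumptions made up for this example.

```python
import platform


def read_os_version():
    """Return a simple OS identifier for the local host, e.g. 'Linux 5.15.0'."""
    return f"{platform.system()} {platform.release()}"


def detect_os_change(baseline, observed):
    """Compare the observed OS version with the approved baseline.

    Returns None when nothing changed, otherwise a small change record
    (hypothetical layout) that a reviewer could approve or investigate.
    """
    if observed == baseline:
        return None
    return {"element": "os_version", "expected": baseline, "observed": observed}
```

Calling `detect_os_change(approved_version, read_os_version())` on a schedule would stay quiet almost all the time, which matches how rarely an OS version actually changes.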

Firmware

Firmware version is similarly monitored by reading it from the device. Add to that a test in Tripwire Enterprise, and the control can be represented in a graphical summary red/green compliance view. The main variance customers ask to accommodate is during firmware updates across a fleet of devices where more than one version would be considered acceptable. This use case, however, is easily accommodated with a policy test.

Firmware monitoring gets particularly interesting when considering endpoints Tripwire cannot or perhaps should not connect to. Some substation endpoints cannot robustly support building and/or closing a telnet or SSH (secure shell) connection. In such a case, a trusted intermediary can be used to gather firmware version and other configuration details as well as relay the data back to Tripwire for unified reporting. (The options here could easily become another blog entry.)

And finally, if a device is not reachable even by an intermediary tool, a person can paste the configuration into a designated location reachable by Tripwire. One customer even built a web front-end for this use case. Until all devices are somehow reachable in this case, the manual step unfortunately seems required, but at least the device configuration is evaluated by standard controls and reported alongside all other devices.
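The firmware policy test described above, where more than one version is acceptable during a fleet rollout, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the device model names, version numbers, and the `firmware version:` line format of a pasted configuration are all hypothetical, and none of this reflects Tripwire Enterprise's actual test syntax.

```python
import re

# Hypothetical approved firmware versions per device model during a rollout.
APPROVED_FIRMWARE = {
    "relay-model-x": {"4.2.1", "4.3.0"},
}


def parse_firmware_version(config_text):
    """Extract the version from a pasted or relayed configuration dump.

    Assumes a line such as 'Firmware Version: 4.3.0'; returns None if absent.
    """
    match = re.search(
        r"^\s*firmware[ _-]?version\s*[:=]\s*(\S+)",
        config_text,
        re.IGNORECASE | re.MULTILINE,
    )
    return match.group(1) if match else None


def firmware_test(model, version, approved=APPROVED_FIRMWARE):
    """Pass only if the version is in the approved set for that model."""
    return version in approved.get(model, set())


def fleet_summary(devices):
    """Map each (name, model, version) tuple to 'pass' or 'fail'."""
    return {
        name: "pass" if firmware_test(model, version) else "fail"
        for name, model, version in devices
    }
```

Because the test takes a version string rather than a live connection, the same check applies whether the value was read directly, relayed by an intermediary, or pasted in by a person.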

Intentionally-Installed Software

There is a two-pronged approach for this requirement. Most intentionally-installed software registers itself (on Windows) or is installed by RPM. Tripwire will capture these as designed. PuTTY, OSI Monarch and Oracle on Linux are examples that would not be captured by Tripwire out of the box, as they don’t register with the OS. The so-called “additional software” feature would simply need to be configured to identify these applications. Internet Explorer can also be included in this scope, although most entities consider it part of the Windows OS.
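Conceptually, identifying software that does not register with the OS comes down to telling the tool where to look. The Python sketch below shows that idea with a small catalog of file locations. It is not the configuration format of the "additional software" feature; the catalog layout and the paths in the usage example are hypothetical.

```python
from pathlib import Path


def find_additional_software(root, catalog):
    """Report catalog entries whose marker files exist under root.

    catalog maps a product name to a list of relative paths (hypothetical
    locations) that indicate the product is present.
    """
    root = Path(root)
    found = {}
    for name, relative_paths in catalog.items():
        hits = [str(root / rel) for rel in relative_paths if (root / rel).is_file()]
        if hits:
            found[name] = hits
    return found
```

For example, `find_additional_software("/", {"PuTTY": ["opt/tools/putty"]})` would list PuTTY only if that assumed path exists on the system.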

Custom Software: What Was …

Prior to WECC’s announcement (referenced in my first blog post) about how it was going to interpret the requirement, the effort across regions was to implement some method of searching key portions of the file system for executable files. Portions of the file system that typically house intentionally-installed software would often be excluded from the search. While this smaller scope is more scalable to scan, it is not as secure. Still, the scanning usually uncovers anywhere from 50 to 200 files per system, which then inspires a healthy discussion about how to manage the files: put them in a standard location, for instance, or consider eliminating some.

For one customer, the scanning for custom software had been in place for a few weeks on some production systems. When we looked at the scan history of one system, we could see an admin had done some PowerShell scripting on the desktop, moved some .ps1 files to the Recycle Bin and not emptied it. Additionally, a major software package had been deployed in a non-standard location and also happened to include a few copies of PuTTY. Good to know!

I encourage every entity to check with their region about how to interpret “custom” software. For non-NERC compliance purposes, I encourage customers to consider using the custom software monitoring control, as it strikes a balance between scalable and informative. The population of executable files on a system can be “large,” but it’s also generally pretty stable. Thus, a monitoring approach based on change management tends to scale well.
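The scanning approach described above (walk key parts of the file system, skip the directories that hold intentionally-installed software, then track changes against a baseline) can be sketched in Python as follows. This is a simplified illustration, not how Tripwire performs the scan: the list of executable suffixes is an assumption, and a real deployment would likely need a broader definition of "executable".

```python
import hashlib
import os

# Assumed set of suffixes treated as executable for this example.
EXECUTABLE_SUFFIXES = {".exe", ".dll", ".bat", ".cmd", ".ps1", ".sh", ".py"}


def scan_executables(root, excluded_dirs=()):
    """Return {path: sha256} for executable-looking files under root.

    excluded_dirs are paths relative to root that are skipped entirely.
    """
    excluded = {os.path.normpath(os.path.join(root, d)) for d in excluded_dirs}
    inventory = {}
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune excluded directories in place so os.walk never descends into them.
        dirnames[:] = [
            d for d in dirnames
            if os.path.normpath(os.path.join(dirpath, d)) not in excluded
        ]
        for filename in filenames:
            if os.path.splitext(filename)[1].lower() in EXECUTABLE_SUFFIXES:
                path = os.path.join(dirpath, filename)
                with open(path, "rb") as handle:
                    inventory[path] = hashlib.sha256(handle.read()).hexdigest()
    return inventory


def diff_inventory(baseline, current):
    """Summarize what was added, removed, or modified since the baseline."""
    shared = set(baseline) & set(current)
    return {
        "added": sorted(set(current) - set(baseline)),
        "removed": sorted(set(baseline) - set(current)),
        "modified": sorted(p for p in shared if baseline[p] != current[p]),
    }
```

Since the population of executables is generally stable, the output of `diff_inventory` is usually empty, and the occasional non-empty result is what prompts the kind of discussion described above.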

Custom Software: What Is …

At least in the WECC region, the guidance has been not to worry about the “may include scripts” language in the Guidelines and Technical Basis section of the requirement document, and to leave it to the entity to determine for itself what scripts or applications should be listed as “custom.” In practice, the scope has amounted to a very short or zero-length list of in-house developed utilities. The cleanest approach I have seen is to use Tripwire's allowlisting software monitoring feature in such a way as to report on just these few in-house developed utilities. This approach produces reporting identical to that for intentionally-installed software.

Security Patches

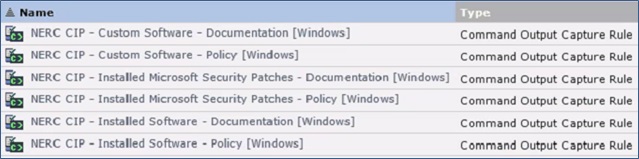

A staple part of the approach is to implement a rule in Tripwire Enterprise to list out the installed patches. This simple step documents system state with a listing of the patches and any day-to-day changes that work well for change management objectives. Patches can be listed in one block of text and tracked as such, or they can be tracked as individual items (elements), as per the preference of the entity. Customers sometimes also use third-party tools for patch reporting (e.g. WSUS for Windows or Satellite for Linux). Example Implementation of Tripwire allowlisting Software Rules for Windows:
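To illustrate the difference between tracking patches as one block of text and tracking them as individual elements, here is a minimal Python sketch. It is not a Tripwire rule, and the function names are made up for the example. It assumes the patch listing arrives as plain text with one patch identifier per line.

```python
import hashlib


def _patch_set(listing):
    """Split a text listing into a set of non-empty, trimmed patch identifiers."""
    return {line.strip() for line in listing.splitlines() if line.strip()}


def block_fingerprint(listing):
    """Track the whole listing as one block: any change alters the hash.

    Lines are sorted first so that a mere reordering is not flagged.
    """
    normalized = "\n".join(sorted(_patch_set(listing)))
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def element_changes(baseline_listing, current_listing):
    """Track patches as individual elements: report exactly what changed."""
    before = _patch_set(baseline_listing)
    after = _patch_set(current_listing)
    return {
        "installed": sorted(after - before),
        "removed": sorted(before - after),
    }
```

The block form only says that something changed, while the element form names the specific patches, which is the trade-off an entity weighs when choosing between the two.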

Here is a summary of the approaches with comments about how widespread the approaches are:

| Requirement Area | Approach Options Summary | Prevalence of the Practice |
| --- | --- | --- |
| OS | | Both options are used equally. |
| Firmware | | The approach using a test is predominant. I am not aware of any entities reporting BIOS versions. |
| Intentionally-Installed (registered, or through RPM) | | This is the predominant approach. |
| Intentionally-Installed (not registered or RPM) | | This is the predominant approach. |
| Custom software | | In WECC at least, the trend is towards Tripwire allowlisting. Some level of system scanning for executables was the predecessor approach. |
| Security Patches | | The trend is towards reliance on allowlisting instead of third party tools or a Tripwire Enterprise-only approach. |

To conclude, for NERC-registered entities, the good news is that the problem of software monitoring has been solved. There are some interpretation and process issues to work out, but automation is at hand. You can get there from here!

For non-NERC readers, if you have a security concern around what is installed in your environment, consider adapting an approach used by the electric utility community to a process that fits your organization. Knowing what is actually in your environment is always the first step towards securing it.

Learn more about how Tripwire technology can help you achieve ongoing, audit-ready NERC CIP compliance by reading our case study on how Western Farmers Electric Cooperative guards its systems with Tripwire.
