One of the main issues I find across the information security industry is that we constantly need to justify our existence. Organizations have slowly realized that they need to spend on IT to enable their businesses. Information security, on the other hand, is the team that constantly prevents the business from doing as it pleases. IT is seen as a driver of success, and security can be, too; the security team just needs to learn how to enable the business. What businesses do understand is risk. If they understand the risks associated with a certain action, they will likely think twice before freely gallivanting in the www (the wild wild web). https://www.slideshare.net/Tripwire/vulnerability-management-myths-misconceptions-and-mitigating-risk-part-i

Cybersecurity Metrics in Business Context

As such, we as security professionals need to ensure we are providing data to the business so that they understand what we do and how we go about protecting them. The question, then, is: What metrics should we provide? As technical folks, it’s easy for us to get caught up in the details. What we often forget is that executives and business-minded individuals have no idea what we’re talking about. They just smile and nod, but when it’s time to pull out the chequebook to fund an information security project, they won’t be able to justify the cost. Next time you are building an executive metrics deck, keep this in mind: If they don’t understand how you are saving them money, they won’t give you money to fund your projects.

Vulnerability Management Metrics

One of the foundational areas of a security program is vulnerability management (VM). This blog post focuses on specific metrics that you should be looking at as part of your vulnerability management program. When talking about VM, it’s very easy to get caught up in scoring, counting the number of vulnerabilities, and counting the number of missing patches. Each of these has its place, but it’s important to know your audience before presenting any of these numbers. An executive audience is looking for trends over time and essentially wants to know that the organization is reducing risk effectively and efficiently. A more technical audience will want to know exactly what effort is required, and where, to reduce that risk.

Executive-Level Metrics

One of the first things an executive team would like to see is how the organization is trending overall. This answers questions such as:

- What is our baseline?

- Are we getting better, or are we getting worse?

- What is our target risk posture, and are we within that target?

Risk Scoring in Vulnerability Management

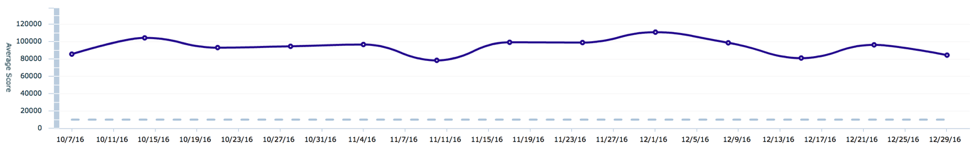

To answer these questions, the first graph I would like to show is the average host risk score over time. The risk score in this scenario is the Tripwire Risk Score. In short, the Tripwire Risk Score takes into account how easy a vulnerability is to exploit, what privilege an attacker would gain upon successful exploitation and the age of the vulnerability. Each host’s risk score is the sum of the scores of all vulnerabilities present on it. At this stage, the key takeaway for executives is whether the risk score is trending up or down.
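As a rough illustration of the aggregation described above, the sketch below sums per-vulnerability scores into a host risk score and averages those across hosts. It assumes each vulnerability’s score has already been computed: the actual Tripwire Risk Score weighting of exploit ease, privilege and age is not reproduced here, and the host names and scores are made up. Counting hosts with no findings in the average is also an assumption.

```python
from statistics import mean

# Hypothetical per-vulnerability scores for each host (illustrative only;
# the real Tripwire Risk Score calculation is not reproduced here).
host_vulns = {
    "web-01": [120.0, 45.5, 300.0],
    "db-01": [800.0],
    "laptop-17": [],  # assumption: hosts with no findings still count
}


def host_risk_score(vuln_scores):
    """Host risk score = sum of the scores of all vulnerabilities present."""
    return sum(vuln_scores)


def average_host_risk(hosts):
    """Average host risk score across all scanned hosts."""
    return mean(host_risk_score(scores) for scores in hosts.values())


def trend(previous_avg, current_avg):
    """The signal executives care about: is the average going up or down?"""
    if current_avg > previous_avg:
        return "up"
    if current_avg < previous_avg:
        return "down"
    return "flat"


if __name__ == "__main__":
    print(round(average_host_risk(host_vulns), 1))  # 421.8
```

Running the same calculation on each weekly scan and comparing consecutive averages with `trend()` gives the up-or-down view shown in the graph.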

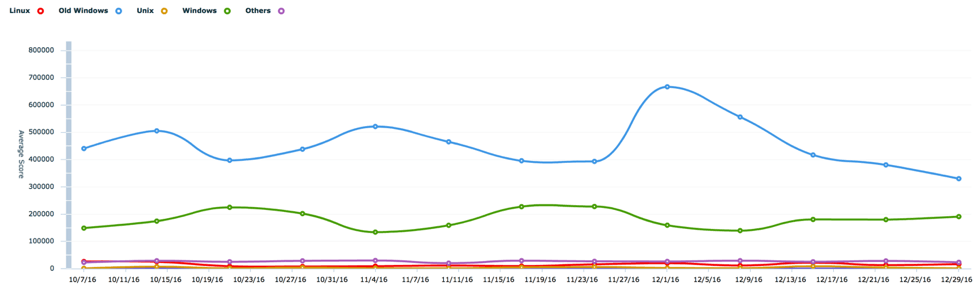

What we see here is that the overall vulnerability risk has stayed relatively steady over the last 12 weeks. For more detail, we can break the same metric down by the individual management groups within our organization. In this example, the organization is broken down by who owns each operating system type.

Here we see that the two greatest contributors to the organization’s risk are old, unsupported Windows operating systems and our current Windows operating systems. To greatly reduce the organization’s risk posture, we can start a project to migrate off the older, unsupported operating systems. Furthermore, we see that the vulnerability risk in each of these areas has remained steady. We can set reduction goals based on the organization’s risk tolerance and on how aggressively the executives want to reduce the risk.
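A minimal sketch of the per-group breakdown, assuming each host carries a management-group label (here an operating system type) and an already-computed host risk score. The group names and scores are hypothetical. It reports both the average host score per group, which matches the graphed metric, and each group’s share of the organization’s total risk, which is one way to identify the greatest contributors.

```python
from collections import defaultdict

# Hypothetical (management group, host risk score) pairs.
hosts = [
    ("Windows (unsupported)", 9000.0),
    ("Windows (unsupported)", 7000.0),
    ("Windows (current)", 4000.0),
    ("Windows (current)", 3000.0),
    ("Linux", 500.0),
]


def risk_by_group(hosts):
    """Return {group: (average host score, share of total org risk)}."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for group, score in hosts:
        totals[group] += score
        counts[group] += 1
    org_total = sum(totals.values())
    return {
        group: (
            totals[group] / counts[group],
            totals[group] / org_total if org_total else 0.0,
        )
        for group in totals
    }


def top_contributors(hosts):
    """Groups ordered by their share of the organization's total risk."""
    summary = risk_by_group(hosts)
    return sorted(summary, key=lambda group: summary[group][1], reverse=True)
```

Tracking each group’s share week over week makes it easy to see whether a migration project, such as retiring unsupported systems, is actually moving the organization’s overall number.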

Vulnerability Management Program Maturity

Typically, organizations in the early stages of their vulnerability management program have average Tripwire Risk Scores well over 20,000. Meanwhile, very mature organizations are able to keep their risk scores below 5,000. To reach that level of maturity, most organizations set risk reduction targets of 10 to 20 percent year over year. This allows their teams to focus both on remediating existing risks and on keeping up to date with current patch levels as new threats emerge.
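To see what a 10 to 20 percent year-over-year target means in practice, the helper below counts how many years of compounding reduction it takes to move an average score from one level to below another. It assumes each year’s reduction is applied to the previous year’s score, and the starting point of 20,000 used in the note that follows is only an illustration.

```python
import math


def years_to_target(start_score, target_score, annual_reduction):
    """Smallest whole number of years of compounding reduction needed for
    start_score to fall strictly below target_score.

    annual_reduction is a fraction, e.g. 0.10 for a 10 percent target; each
    year's reduction is assumed to apply to the previous year's score.
    """
    if not 0 < annual_reduction < 1:
        raise ValueError("annual_reduction must be between 0 and 1")
    if start_score < target_score:
        return 0
    # Solve start * (1 - r) ** n < target for n. Dividing by the negative
    # log(1 - r) flips the inequality: n > log(target / start) / log(1 - r).
    bound = math.log(target_score / start_score) / math.log(1 - annual_reduction)
    return math.floor(bound) + 1


def projected_scores(start_score, annual_reduction, years):
    """Year-by-year scores under the same compounding assumption."""
    return [start_score * (1 - annual_reduction) ** n for n in range(years + 1)]
```

Under these assumptions, a 20 percent annual reduction takes an average score of 20,000 below 5,000 in seven years, while a 10 percent reduction takes fourteen.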

The Right Way to Prioritize Vulnerabilities

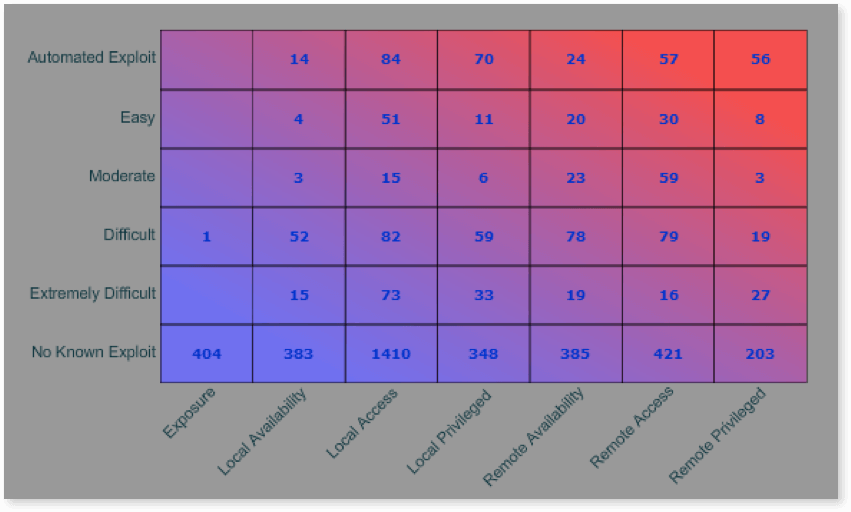

Now that we have seen how our organization is trending, let us dive deeper into the specific metrics that give us a better understanding of our current posture. The first metric we will look at is a heat map of vulnerability criticality, based on the ease of exploitation and the privilege an attacker can gain upon successfully exploiting the vulnerability.
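The heat map is essentially a two-dimensional count. The sketch below tallies vulnerabilities by ease of exploit and privilege gained. The category labels and their ordering are hypothetical stand-ins rather than Tripwire’s exact categories, arranged so that the easiest exploit granting the most privilege lands in the top-right cell.

```python
from collections import Counter

# Hypothetical axis labels, ordered from least to most severe so that the
# worst case ends up in the top-right cell of the matrix.
EASE = ["Difficult", "Moderate", "Easy", "Automated Exploit"]  # columns, left to right
PRIVILEGE = ["Local User", "Local Privileged", "Remote User", "Remote Privileged"]


def heat_map(vulns):
    """Count vulnerabilities per (privilege, ease) cell.

    vulns: iterable of (ease, privilege) tuples.
    Returns a list of rows, most severe privilege first (top row), with
    columns in EASE order, so matrix[0][-1] is the top-right cell.
    """
    counts = Counter(vulns)
    return [[counts[(ease, priv)] for ease in EASE] for priv in reversed(PRIVILEGE)]
```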

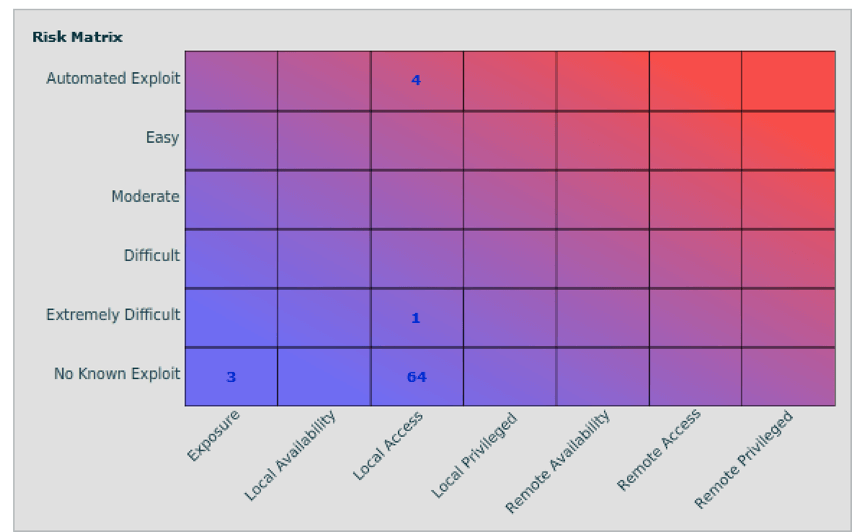

The first priority for remediation should be the vulnerabilities in the top-right corner of the matrix, because an attacker can exploit them remotely with minimal effort. These are the “low hanging fruit” that should be addressed immediately. Next, any vulnerability with an automated exploit should be remediated, since successful exploitation requires minimal effort. This example shows vulnerability counts across the entire organization, but the matrix can easily be filtered to show only the vulnerabilities assigned to a particular owner. Similarly, filters can be applied to show the results for a particular application only. Many organizations want to know how many vulnerabilities are present in their Java and Adobe applications. Below is the same matrix showing only the vulnerabilities for Java and Adobe.
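One way to turn the remediation order above into a queue, with the owner and application filters applied first. This is a sketch under assumptions: the `Vuln` record, its field names and the category labels are hypothetical, and the “top-right corner” is simplified to a single cell (automated exploit plus remote privileged access).

```python
from dataclasses import dataclass


@dataclass
class Vuln:
    vuln_id: str
    ease: str         # hypothetical labels, e.g. "Automated Exploit", "Easy"
    privilege: str    # hypothetical labels, e.g. "Remote Privileged", "Local User"
    application: str
    owner: str


def priority_tier(v):
    """Lower tier = remediate sooner.

    0: top-right cell (automated exploit granting remote privileged access)
    1: any other vulnerability with an automated exploit
    2: everything else
    """
    if v.ease == "Automated Exploit" and v.privilege == "Remote Privileged":
        return 0
    if v.ease == "Automated Exploit":
        return 1
    return 2


def remediation_queue(vulns, applications=None, owner=None):
    """Filter by application and/or owner, then order by priority tier.

    sorted() is stable, so ties keep their original order.
    """
    selected = [
        v for v in vulns
        if (applications is None or v.application in applications)
        and (owner is None or v.owner == owner)
    ]
    return sorted(selected, key=priority_tier)
```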

Teams that are overwhelmed by hundreds of vulnerabilities to remediate can focus on the ones that will have the greatest impact on the organization. In this particular example, they can first remediate the four vulnerabilities that have associated automated exploits before moving on to the other vulnerabilities.

Other Cybersecurity Metrics to Be Aware of

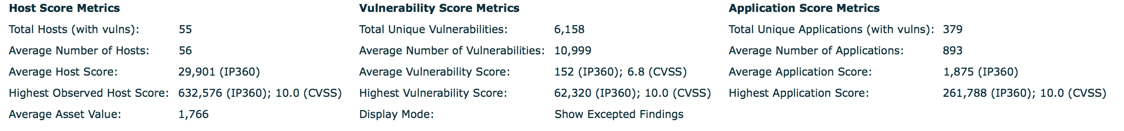

At this point, executives should have a good idea of how the organization is trending from a vulnerability risk perspective. If they would like more information, other key metrics to consider include host, vulnerability and application metrics. Below are some examples:

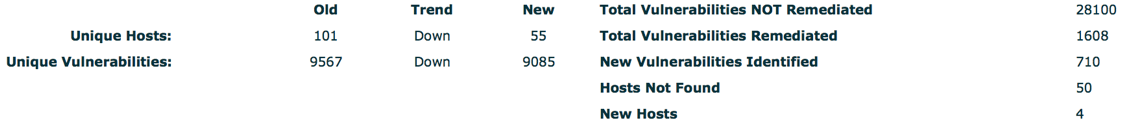

It is also important to track the number of hosts and vulnerabilities identified over time. The example below shows the difference between two points in time across the entire organization.
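Comparing two points in time can be sketched as set operations on scan findings, assuming each finding is identified by a (host, vulnerability ID) pair. A finding present in the earlier scan but absent from the later one is counted as remediated, which is one way a figure like 1,608 remediated vulnerabilities could be derived; note that a host that was decommissioned or simply not rescanned would also drop out, so a real report may need to account for scan coverage. The identifiers in any example data are made up.

```python
def compare_snapshots(before, after):
    """Compare findings from two scans taken at different points in time.

    before, after: sets of (host, vulnerability_id) tuples.
    """
    hosts_before = {host for host, _ in before}
    hosts_after = {host for host, _ in after}
    return {
        "hosts_before": len(hosts_before),
        "hosts_after": len(hosts_after),
        # Present in the earlier scan, absent from the later one.
        "remediated": len(before - after),
        # Present in the later scan only.
        "new": len(after - before),
        # Still open in both scans.
        "not_remediated": len(before & after),
    }
```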

We can see that while a high number of vulnerabilities were not remediated, the team did make progress, remediating 1,608 vulnerabilities. These numbers can also be broken down further by department or system owner, depending on the requirements of each organization. That’s all for now. In Part 2, we will look at some of the operational vulnerability reports that can help technical teams focus their remediation efforts on reducing risk to the business. In the meantime, you can read our Vulnerability Management Buyer’s Guide.

Meet Fortra™ Your Cybersecurity Ally™

Fortra is creating a simpler, stronger, and more straightforward future for cybersecurity by offering a portfolio of integrated and scalable solutions. Learn more about how Fortra’s portfolio of solutions can benefit your business.