LinkedIn says it is beefing up its security in an attempt to better protect its user base from fraudulent activity such as profiles that use AI-generated deepfake photos, and messages that may contain unwanted or harmful content.

The new features, which are being rolled out globally over the next several weeks, have been previewed in a blog post by LinkedIn's Vice President of product management, Oscar Rodriguez.

Better security can't come soon enough, as criminals have long exploited LinkedIn to identify and connect with unsuspecting individuals within targeted companies, with the intention of conducting fraud, stealing information, and planting malware.

LinkedIn clearly recognises this has become a problem, hence its introduction of new security features.

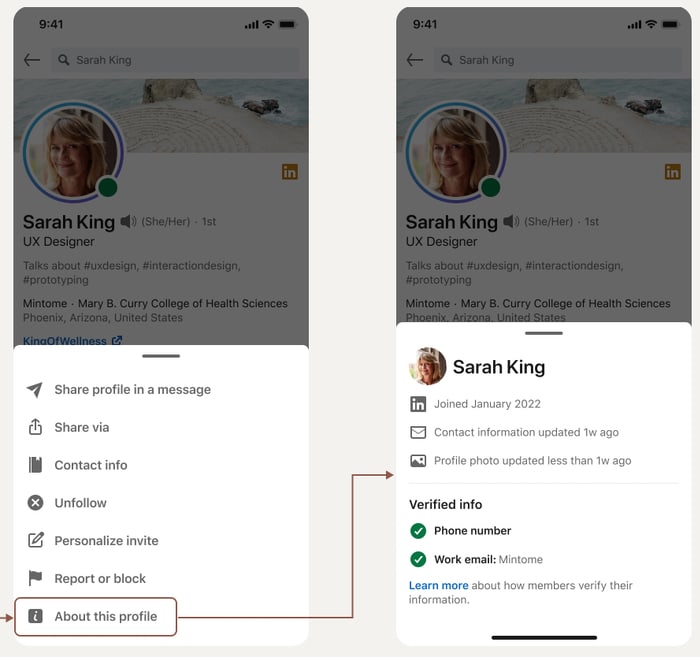

"About this profile"

Every LinkedIn member's profile page will soon have a feature called "About this profile" where users can find out when a profile was created and last updated, as well as whether the profile's owner has verified a phone number or work email address.

The idea is that this should give users an additional data point to help them decide whether they should trust a LinkedIn profile or not. For instance, someone who joined the site five years ago might be considered more trustworthy than someone whose profile was created five days ago.

LinkedIn says that initially only workers at a "limited number of companies" will be able to verify their email addresses, but that it expects to expand the facility over time.
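To make the reasoning concrete, here is a minimal sketch of how a reader might weigh the data points described above. It is not LinkedIn's method: the `ProfileSignals` class, the `trust_hints` function, and the 180-day threshold are all hypothetical, chosen purely for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ProfileSignals:
    """Hypothetical container for the details "About this profile" is said to show."""
    created: date
    last_updated: date  # shown by the feature, but not scored in this sketch
    phone_verified: bool
    work_email_verified: bool


def trust_hints(profile: ProfileSignals, today: date, min_age_days: int = 180) -> list[str]:
    """Return reasons for caution; an empty list means this sketch found none."""
    hints = []
    age_days = (today - profile.created).days
    # The 180-day default is an arbitrary assumption, not a LinkedIn rule.
    if age_days < min_age_days:
        hints.append(f"profile is only {age_days} days old")
    if not (profile.phone_verified or profile.work_email_verified):
        hints.append("no verified phone number or work email")
    return hints


# A profile created five days ago with nothing verified.
new_profile = ProfileSignals(date(2022, 10, 26), date(2022, 10, 30), False, False)
print(trust_hints(new_profile, today=date(2022, 10, 31)))
# → ['profile is only 5 days old', 'no verified phone number or work email']
```

An empty list does not mean a profile is genuine. These are extra data points, not proof either way.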

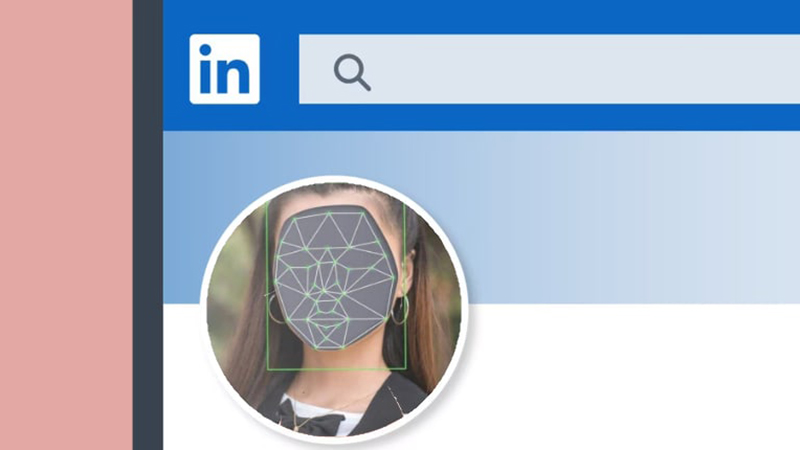

Detecting fake accounts using AI-generated profile photos

LinkedIn also says that it is responding to the rapid advances in deepfake or "AI-based synthetic" image-generating technology, which can create convincing-looking profile photos that make LinkedIn accounts appear more authentic.

To combat this, the site says that it has introduced a "deep-learning-based model" using "cutting-edge technology" that proactively checks uploaded profile photos to determine if they are AI-generated or not.

The need for some form of defence against deepfake photo profiles can't be emphasised strongly enough.

Earlier this year, a study by digital media forensics experts found that AI-generated profile pictures, which are being used for the purposes of financial fraud, are not only "indistinguishable from real faces", but are also considered "more trustworthy."

Researchers at Stanford Internet Observatory discovered more than 1000 LinkedIn profiles were using deepfake facial images created using artificial intelligence.

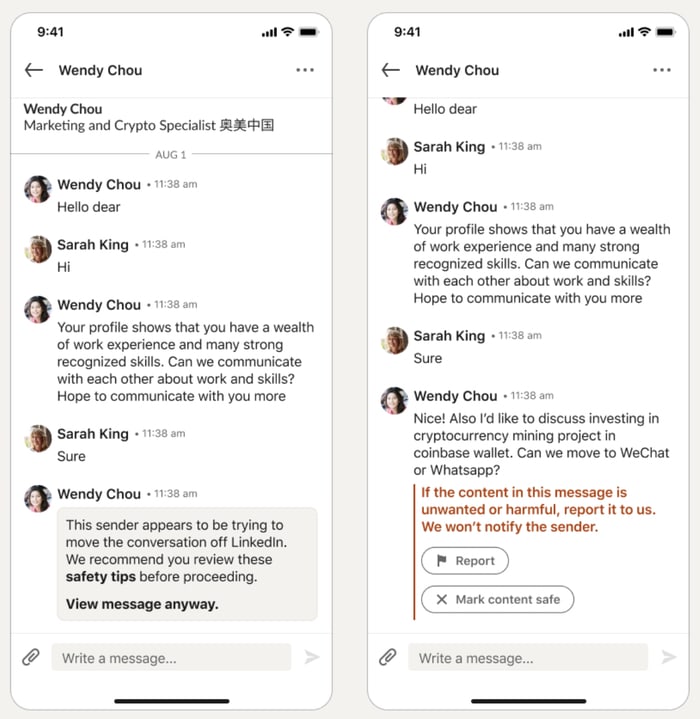

Warning about suspicious messages

LinkedIn says that it will warn users when they receive messages that may put them at risk, and give them the opportunity to report the content without letting the sender know.

For instance, if you receive a message from someone who asks you to continue the conversation on an instant messaging service such as WhatsApp, it's possible that they may then attempt to send you a malicious link that might have been intercepted if the conversation had remained on LinkedIn.

In the past LinkedIn has warned users to be wary of messages from people they don't know who ask them for money, job opportunities that sound too good to be true, or romantic messages.
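As a rough illustration of the warning signs described above, a simple keyword check might look like the sketch below. This is an assumption-laden toy, not LinkedIn's detection system: the `RED_FLAGS` patterns and the `message_red_flags` function are hypothetical, and real scam messages will easily evade keyword matching.

```python
import re

# Illustrative patterns only (assumptions, not LinkedIn's rules).
# Only WhatsApp is named in the article; Telegram is added as a further example.
RED_FLAGS = {
    "asks to move off-platform": re.compile(r"\b(whatsapp|telegram)\b", re.IGNORECASE),
    "asks for money": re.compile(
        r"\b(wire|send|transfer)\b.*\b(money|funds|payment)\b", re.IGNORECASE
    ),
}


def message_red_flags(text: str) -> list[str]:
    """Return the label of every illustrative pattern found in the message."""
    return [label for label, pattern in RED_FLAGS.items() if pattern.search(text)]


print(message_red_flags("Great to connect! Can we continue this chat on WhatsApp?"))
# → ['asks to move off-platform']
```

A match is only a prompt to look more closely, and a message with no matches can still be a scam.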

In addition, the site has asked users to report any content or profile they believe may indicate a scam. Tell-tale signs, according to the site, include abnormal profile images, incomplete work histories, a lack of connections in common, or anything else that looks out of place.

It remains to be seen how good a job LinkedIn's new features will do at protecting its many millions of users, but anything that makes the life of cybercriminals more complicated has to be welcomed.

Editor’s Note: The opinions expressed in this guest author article are solely those of the contributor, and do not necessarily reflect those of Tripwire, Inc.
