I recently introduced a three-part series about injecting security hygiene into the container environment. In the first installment, I provided some background on what containers are and how the container pipeline works. Let's now discuss how we can incorporate security into that pipeline.

Assessing Images Before Production

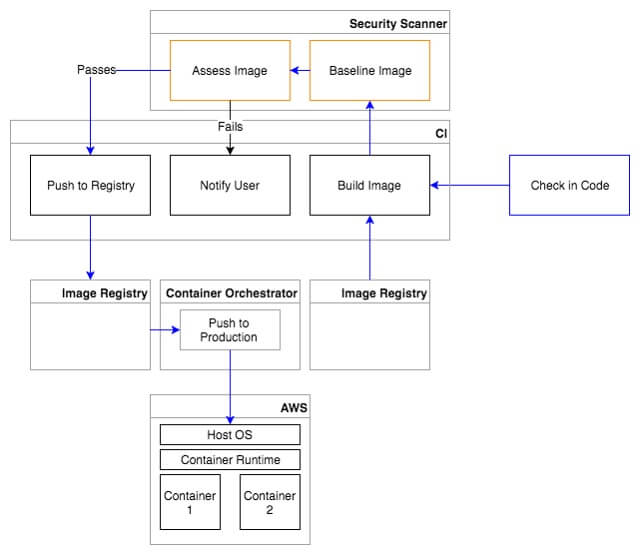

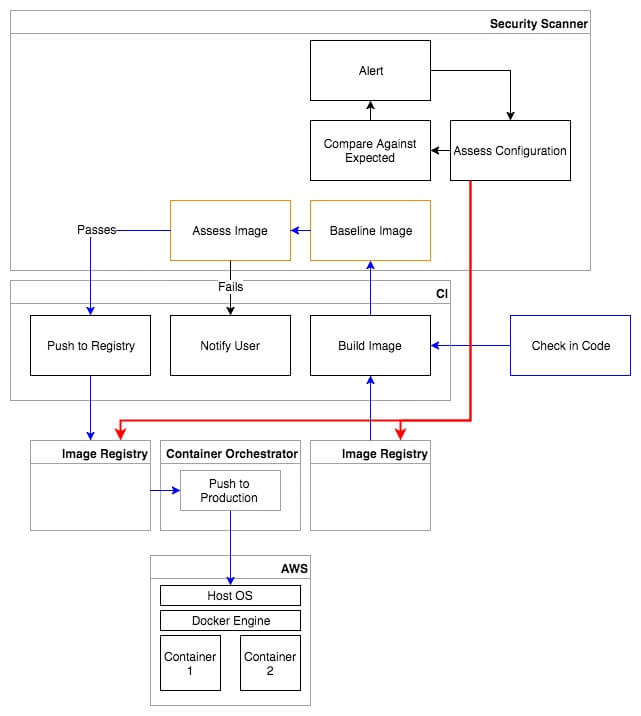

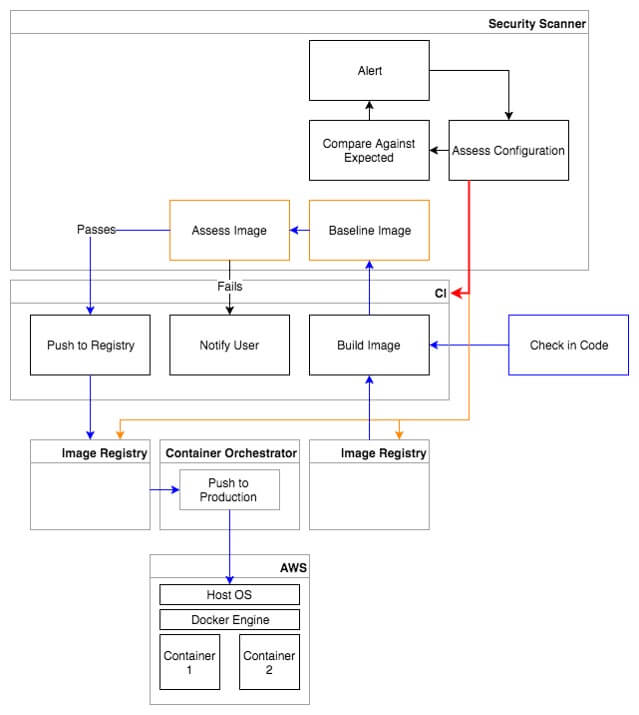

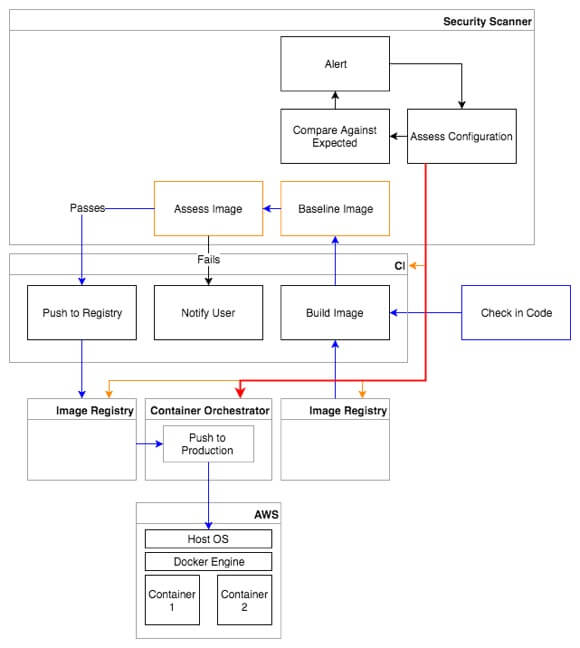

To secure the pipeline, the first thing we can do is bring a security assessment tool into the build process. Instead of having your continuous integration tool build your image and immediately push it into a registry, the image should first be pushed into a security tool that can assess it for vulnerabilities and misconfigurations. Based on a policy of your organization's choosing, the image should be passed or failed, and only passed images should be pushed into the production-ready image registry. This ensures that security assessments take place at the earliest stages of your container development and that at-risk containers never have a chance to make it into production.
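To make the pass/fail step concrete, here is a minimal sketch of a policy gate in Python. It assumes your assessment tool can hand you a list of findings with a severity attached; the `Finding` and `Policy` shapes, the severity names, and the identifiers are hypothetical and not taken from any particular product.

```python
from dataclasses import dataclass

# Severity ranking used for comparisons; the level names are an assumption.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass(frozen=True)
class Finding:
    """One issue reported by a (hypothetical) image assessment tool."""
    identifier: str  # a vulnerability ID or a configuration check name
    severity: str    # one of the keys in SEVERITY_RANK


@dataclass(frozen=True)
class Policy:
    """Organization-defined pass/fail rules (hypothetical shape)."""
    max_allowed_severity: str = "medium"
    blocked_identifiers: frozenset = frozenset()


def evaluate_image(findings, policy):
    """Return (passed, reasons); an image passes only if nothing violates the policy."""
    limit = SEVERITY_RANK[policy.max_allowed_severity]
    reasons = []
    for finding in findings:
        if finding.identifier in policy.blocked_identifiers:
            reasons.append(f"{finding.identifier}: explicitly blocked")
        elif SEVERITY_RANK[finding.severity] > limit:
            reasons.append(
                f"{finding.identifier}: severity {finding.severity} "
                f"exceeds {policy.max_allowed_severity}"
            )
    return (not reasons, reasons)


if __name__ == "__main__":
    # Placeholder findings, not real scan output.
    findings = [Finding("EXAMPLE-VULN-1", "low"), Finding("runs-as-root", "high")]
    passed, reasons = evaluate_image(findings, Policy())
    print("PASS" if passed else "FAIL", reasons)
```

In a real pipeline, the build job would push to the production-ready registry only when `passed` is true, and would fail the build otherwise.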

Container Registries

Now let's talk about the container registries. As part of your build process, the bottom parent image layer will come out of a registry. Instead of pulling from a public registry, you should consider using a private registry that contains only images that have been approved by your organization and pre-assessed for vulnerabilities and misconfigurations. Once the final image has been built and assessed, it will go into another private image registry. It's important to ensure that you're using secure private registries by requiring that all connections to them be SSL-enabled to protect your images in transit. I would also recommend setting up authentication on your registries as an additional layer of protection. Use Docker Content Trust as well, so that clients pulling images from your registries can validate the images and ensure they haven't been tampered with. Finally, you'll want a security assessment tool to continually monitor your private registries to ensure that the only images in them are those that have been assessed and approved.
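Two of the checks above are easy to sketch in Python: confirming that an image reference points at an approved registry, and auditing a registry for images that were never approved. The host names and digests are placeholders. The host-detection rule is a simplified approximation of Docker's naming convention, in which the first path component counts as a registry host only if it contains a dot or a colon, or is `localhost`.

```python
def registry_host(image_ref):
    """Best-effort guess at the registry host in an image reference.

    Simplified assumption: without an explicit host, the image comes
    from Docker Hub, reported here as "docker.io".
    """
    first, sep, _rest = image_ref.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return "docker.io"


def is_from_approved_registry(image_ref, approved_hosts):
    """True if the image would be pulled from one of the approved registries."""
    return registry_host(image_ref) in approved_hosts


def unapproved_images(registry_digests, approved_digests):
    """Return digests present in the registry that were never assessed and approved."""
    return sorted(set(registry_digests) - set(approved_digests))


if __name__ == "__main__":
    approved_hosts = {"registry.example.internal"}  # hypothetical private registry
    for ref in ("ubuntu:20.04", "registry.example.internal/base/python:3.11"):
        print(ref, "->", is_from_approved_registry(ref, approved_hosts))

    # Placeholder digests standing in for what a registry listing might return.
    print(unapproved_images({"sha256:aaa", "sha256:bbb"}, {"sha256:aaa"}))
```

A check like `is_from_approved_registry` could run against the parent image named in a build file, while `unapproved_images` is the kind of comparison a monitoring job would repeat on a schedule.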

Continuous Integration

Continuous integration (CI) tools are often overlooked by security teams as a critical component in the container pipeline. Your CI tool may have access to your code repository, your production container registries, the security assessment tool, and potentially even your cloud platform itself.

To give an example of the dangers that can exist here, a new attack vector was presented by SpaceB0x at DEF CON 25, where he was able to use a victim's public GitHub repository to gain access to their Azure environment and deploy new networks within it without any interaction from the victim. He did this by submitting a pull request to their public GitHub repository that contained changes to their build scripts. This automatically triggered a build via a webhook, which executed his code and gave him root access to a build system that contained credentials for Azure. He even created a tool called CIDER (Continuous Integration and Deployment Exploiter) to automate exploiting and attacking CI build chains.

https://www.youtube.com/watch?v=mpUDqo7tIk8

So, what lessons can we take away from this? Aside from not triggering builds of code that hasn't been code-reviewed, especially code from unapproved users, don't use your CI tool for container deployment. Use it to build images and push them to a registry, and then use a separate orchestrator to pull those images and deploy them. This way, your CI tool will be incapable of making API calls to your cloud platform.

Beyond basic security hygiene and RBAC, I would also recommend monitoring the CI tool's build jobs for integrity. A change to a build job could inadvertently cause the security assessment process to be bypassed, or a job could be created or modified so that it pushes unwanted images into your production image registry.
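One simple way to approach build-job integrity monitoring is to fingerprint each job definition and compare it against a known-good baseline. The sketch below assumes you can export each job's definition as text; the job names and contents are made up, and how you export them depends on your CI tool.

```python
import hashlib


def fingerprint(job_definition):
    """SHA-256 hex digest of a job definition's text."""
    return hashlib.sha256(job_definition.encode("utf-8")).hexdigest()


def snapshot(jobs):
    """Map each job name to the fingerprint of its definition."""
    return {name: fingerprint(text) for name, text in jobs.items()}


def detect_changes(baseline, current):
    """Compare two snapshots and report added, removed, and modified jobs."""
    added = sorted(current.keys() - baseline.keys())
    removed = sorted(baseline.keys() - current.keys())
    modified = sorted(
        name
        for name in baseline.keys() & current.keys()
        if baseline[name] != current[name]
    )
    return {"added": added, "removed": removed, "modified": modified}


if __name__ == "__main__":
    # Hypothetical job definitions; real ones would be exported from the CI tool.
    approved = snapshot({"build-app": "build; assess; push if passed"})
    observed = snapshot({
        "build-app": "build; push",          # assessment step silently dropped
        "side-channel": "push to registry",  # job nobody approved
    })
    print(detect_changes(approved, observed))
```

Any non-empty result is worth an alert: a modified job may have lost its assessment step, and an added job may be pushing images around the process entirely. The baseline itself should live somewhere the CI tool cannot write to.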

Container Orchestrator

The container orchestrator will be responsible for pulling containers out of your container registry and pushing them into production, among other things. A compromised orchestrator could mean anything from unwanted containers running in production to containers running in an insecure configuration. You should assess your orchestrator against benchmarks such as CIS to ensure that it's configured securely. Orchestrators like Kubernetes typically have tools you can use to further secure your container environment, so it's important that you learn the best practices for your tool of choice. For example, Kubernetes provides the ability to create administrative boundaries between resources, set up network segmentation, and apply security contexts to your containers.

As one example of how you might use Kubernetes to further secure your containers, NIST recommends that you assign sensitivity levels to your containers and only run containers of the same sensitivity level on the same host OS kernel, to limit the impact of a compromised container. You wouldn't want a container handling financial data to be running on the same host OS as one running the publicly exposed web UI. Kubernetes provides the ability to apply labels, which are essentially key-value pairs, to pods and nodes. You could use these labels to assign a sensitivity level to your nodes and pods, and then use nodeSelectors or node affinity to ensure that your pods are only deployed onto nodes with the proper sensitivity level.
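As a rough illustration of the labeling idea, the Python sketch below builds a pod manifest that carries a sensitivity label and a matching `nodeSelector`, and mirrors the matching rule: a pod with a `nodeSelector` is only eligible for nodes that carry every listed key-value pair. The label key `sensitivity`, its values, and the image name are my own placeholders, not anything Kubernetes or NIST prescribes.

```python
import json


def pod_manifest(name, image, sensitivity):
    """Build a minimal Pod manifest pinned to nodes of one sensitivity level."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"sensitivity": sensitivity}},
        "spec": {
            # The scheduler only considers nodes that carry this exact label.
            "nodeSelector": {"sensitivity": sensitivity},
            "containers": [{"name": name, "image": image}],
        },
    }


def node_matches(node_labels, node_selector):
    """Mirror the nodeSelector rule: every selector pair must be on the node."""
    return all(node_labels.get(key) == value for key, value in node_selector.items())


if __name__ == "__main__":
    pod = pod_manifest("billing", "registry.example.internal/billing:1.0", "high")
    print(json.dumps(pod, indent=2))  # kubectl accepts JSON manifests as well as YAML

    selector = pod["spec"]["nodeSelector"]
    print(node_matches({"sensitivity": "high", "zone": "a"}, selector))
    print(node_matches({"sensitivity": "low"}, selector))
```

Nodes would need the corresponding label first, for example via `kubectl label nodes <node-name> sensitivity=high`. Keep in mind that a `nodeSelector` only constrains the pods that carry one, so every pod needs a sensitivity level assigned for the separation to hold.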

Conclusion

Stay tuned for my next installment, in which I'll discuss how to secure the container stack. You can also find out more about securing the entire container stack, lifecycle, and pipeline here. Catch up with the full series here: