There’s a Russian proverb: “Doveryai, no proveryai.” (Trust, but verify.) You trust your IT department to keep your systems up and running and configured securely. But do you verify those configurations? Often, in the rush to get things done quickly, some things slip through the cracks, and security is what most often ends up between the couch cushions. Without a security policy for each platform running in your environment, the security and availability of your systems may be compromised.

Security Controls are Important for Security Hygiene

This is why an important part of security hygiene at many companies is setting security controls for the operating systems and applications running on these systems. As you know, setting configuration controls for login, auditing, least privilege, and so on is just the first part of securing the environment. Testing your systems for secure configuration settings on an ongoing or continual basis has become the next part of proper security hygiene. There’s a reason CIS, NIST, ISO, and others have published standards for securing the platforms you use, and expect you to keep your systems in that securely configured state. These standards extend into the cloud as well. After all, whether you’ve built an internal cloud to meet demand or turned to public cloud providers such as Amazon, Microsoft, or Google to buy computing resources, those platforms need to be configured securely. Often you’ll use a tool such as Chef or Puppet to configure these resources, and these tools do a great job of setting things up both in the cloud and on-premises.

Taking Security Controls into the Cloud

But here’s where the “Trust, but verify” adage comes into play: even though you trust that your configuration tools are setting up the OS and applications correctly, and that your IT staff has carefully gone through these settings to ensure they’re correct, has an independent group verified that the systems are actually configured securely? Do you have an independent verification step in place? Has something slipped through the cracks into the couch cushions? You need to verify your security controls across both on-premises and cloud assets. Cloud providers give you the resources, but when you spin up an instance of an OS platform, it generally still comes with the out-of-the-box configuration. A Red Hat Linux system rented from any of the public cloud services is still out-of-the-box Red Hat Linux. Test that system against the CIS Benchmark for that Red Hat release, and you’ll find that it passes just under 50 percent of the tests. Windows needs a comparable amount of remediation to be considered secure. Funny, that’s exactly the same as the on-premises resources you’ve been dealing with forever.
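To make “passing just under 50 percent of the tests” concrete, here is a minimal Python sketch of how such a compliance score is computed: each rule names a setting and the value a hardened baseline expects, and the score is the share of rules the system satisfies. The rules and the sample `sshd_config` text are illustrative assumptions, loosely modeled on the kind of SSH checks a CIS Benchmark contains; they are not the benchmark’s actual rule IDs or wording, and a real assessment covers far more than one file.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """One illustrative hardening check: a setting and its required value."""
    name: str
    key: str
    expected: str


# Hypothetical baseline rules for sshd; NOT the official CIS rule text or IDs.
BASELINE = [
    Rule("SSH root login disabled", "permitrootlogin", "no"),
    Rule("SSH empty passwords disabled", "permitemptypasswords", "no"),
    Rule("SSH X11 forwarding disabled", "x11forwarding", "no"),
    Rule("SSH MaxAuthTries limited", "maxauthtries", "4"),
]


def parse_sshd_config(text: str) -> dict[str, str]:
    """Parse 'Keyword value' lines, skipping blanks and comments.

    sshd keywords are case-insensitive, so keys are lowercased. As in sshd,
    the first occurrence of a keyword wins.
    """
    settings: dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)
        if len(parts) == 2:
            settings.setdefault(parts[0].lower(), parts[1].strip())
    return settings


def evaluate(settings: dict[str, str], rules: list[Rule]) -> tuple[float, list[str]]:
    """Return the pass percentage and the names of the failing rules.

    This sketch only looks at what is written in the file, so an absent
    setting counts as a failure even where sshd's built-in default is fine.
    """
    failures = [r.name for r in rules if settings.get(r.key, "").lower() != r.expected]
    passed = len(rules) - len(failures)
    return 100.0 * passed / len(rules), failures


if __name__ == "__main__":
    # Illustrative "out-of-the-box" style config, not copied from any distro.
    sample = """
    PermitRootLogin yes
    X11Forwarding yes
    PermitEmptyPasswords no
    MaxAuthTries 4
    """
    score, failed = evaluate(parse_sshd_config(sample), BASELINE)
    print(f"{score:.0f}% passed; failing: {failed}")
```

Scoring is deliberately simple (every rule weighs the same); a real benchmark tool may weight or group checks differently.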

Treating your cloud the same as on-premises

Did you think that just because another company is supplying you with the resources, they were going to secure them for you as well?

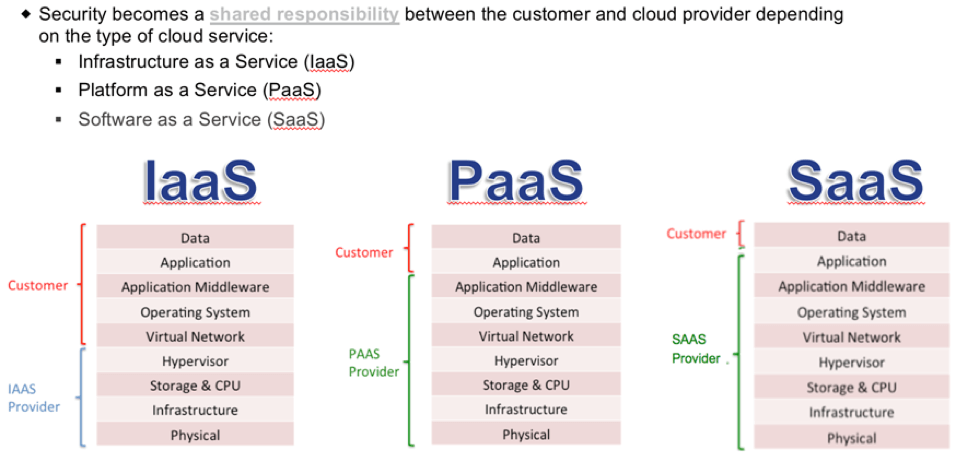

In nearly every discussion about security in the cloud, we must cover the zones of security: what am I responsible for securing, and what is the cloud provider responsible for securing? In the case of PaaS, the controls are the configuration settings of the platforms. When you spin up a Red Hat instance in Azure without built-in applications (you’re going to install applications onto the platform), you’re responsible for securing that platform. The cloud provider gives you a platform to work with, and you’re responsible for securing both the application and the data. The best way to protect your data and application is to always encrypt anything you put in cloud storage. That way, deletion can include digital shredding: destroy the encryption keys, and whatever ciphertext remains is unreadable. In a cloud environment, that’s the only way you can guarantee what happens to data on resources you’ve terminated.

If you’re spinning up an application running on some platform (Oracle DB, MS SQL DB, Apache, etc.) where the cloud provider has locked down the OS so that you cannot make changes to the underlying system, and you access the instance only through the application’s APIs (for example, DB calls over port 1521 for Oracle), then you’re running a SaaS-type product. In this case, you’re responsible for securing the data inside the application, but you cannot touch the underlying OS. One thing you can do is use the API to gather the configuration settings and test them against a policy. Another is to encrypt whatever data is going into the SaaS. If the data is encrypted, it should be useless if it’s stolen, and if you shut down the service and discard the keys, the data is useless. (That’s digital shredding: just toss the keys.) Verifying the database’s configuration settings and ensuring encryption is turned on for your SaaS product are both good controls to put in place.
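For the SaaS case, where you can reach the database only through its API, the verification step boils down to pulling the configuration settings and testing each against a policy. The Python sketch below assumes the settings have already been fetched into a dictionary (how you fetch them depends on the product and is not shown). The setting names, such as `encryption_at_rest` and `min_password_length`, and the thresholds are hypothetical placeholders, not any vendor’s real parameter names. Each policy entry is a predicate, so a rule can express a minimum or a required flag rather than only an exact match.

```python
from typing import Any, Callable

# Hypothetical policy: setting name -> (description, predicate on the value).
Policy = dict[str, tuple[str, Callable[[Any], bool]]]

DB_POLICY: Policy = {
    "encryption_at_rest": ("Storage encryption enabled", lambda v: v is True),
    "tls_required": ("Client connections require TLS", lambda v: v is True),
    "min_password_length": ("Passwords at least 14 characters",
                            lambda v: isinstance(v, int) and v >= 14),
    "audit_logging": ("Audit logging enabled", lambda v: v is True),
}


def check_settings(settings: dict[str, Any], policy: Policy) -> list[str]:
    """Return a description of every policy rule the settings violate.

    A setting missing from the API response is reported as a failure,
    because a control you cannot verify should not be assumed to be in place.
    """
    failures = []
    for key, (description, ok) in policy.items():
        if key not in settings or not ok(settings[key]):
            failures.append(f"{key}: {description}")
    return failures


if __name__ == "__main__":
    # Illustrative values standing in for what the product's API returned.
    fetched = {"encryption_at_rest": False, "tls_required": True,
               "min_password_length": 8}
    for failure in check_settings(fetched, DB_POLICY):
        print("FAIL", failure)
```

With the sample values above, the check flags disabled encryption, the short password minimum, and the missing audit-logging setting, while the TLS rule passes.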
Once you’re done testing these controls in the cloud, it would be a good idea to put the same controls in place for your on-premises applications by checking the security control settings for Apache, Oracle, MS SQL Server, PostgreSQL, etc. Start encrypting data in the on-premises environment, too.

Is everything in the cloud and on-premises configured securely?

You might trust that your OS and applications are configured securely, but have you verified that? Do you have something that pulls in all of those controls and tests them to ensure they are configured properly, both in the cloud and on-premises? A view of your controls in your on-premises environment gives you one large data point for your current risk posture. Similarly, a view of your controls in the cloud yields another large data point for risk in the assets you’re responsible for securing. A view across both environments gives you much more information for assessing risk across the entire environment.

The cloud environment is typically more dynamic than an on-premises environment. This makes measuring the effectiveness of your controls different in the two environments, even if you’re using Tripwire to test compliance with your standards across both. The Tripwire customers I’ve been working with who are testing and measuring their effectiveness in maintaining security controls have been taking a similar approach to their cloud environments. Cloud assets tend to come and go on an as-needed basis: some last only an hour, while others remain up for months. Regardless of lifespan, a new cloud instance should come up in a secure state, with all controls set and configured securely right from the start, and the configuration of a running asset should never change. When an update to the OS or application is required, it should be built into a new template and tested. If all goes well, a new instance containing the updates is created from that template and the old instance is destroyed. In this type of cloud deployment lifecycle (typical when using a DevOps methodology), any detected change to a running instance should be treated as a security incident. Compliance must be monitored on a continuous basis.
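In the immutable lifecycle described above, one simple way to verify that a running instance has not changed is to record a fingerprint of each monitored configuration file when the instance is created, then treat any later difference as an incident. The Python sketch below hashes file contents with SHA-256 from the standard library. The `Incident` record and the watched paths are illustrative assumptions; this is a generic illustration of the idea, not a description of how Tripwire or any other product implements change detection.

```python
import hashlib
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class Incident:
    """Hypothetical incident record raised when a running instance changes."""
    path: str
    kind: str  # "modified", "added", or "removed"


def fingerprint(paths: list[Path]) -> dict[str, str]:
    """Map each existing file to the SHA-256 hex digest of its contents."""
    return {
        str(p): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in paths
        if p.is_file()
    }


def detect_drift(baseline: dict[str, str], current: dict[str, str]) -> list[Incident]:
    """Compare a current fingerprint with the baseline taken at instantiation.

    On an immutable instance no change is expected, so every difference
    (modified, added, or removed file) is reported as an incident.
    """
    incidents = []
    for path in sorted(baseline.keys() | current.keys()):
        if path not in current:
            incidents.append(Incident(path, "removed"))
        elif path not in baseline:
            incidents.append(Incident(path, "added"))
        elif baseline[path] != current[path]:
            incidents.append(Incident(path, "modified"))
    return incidents


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as d:
        cfg = Path(d) / "sshd_config"
        cfg.write_text("PermitRootLogin no\n")
        watched = [cfg, Path(d) / "new.conf"]
        baseline = fingerprint(watched)          # taken when the instance starts
        cfg.write_text("PermitRootLogin yes\n")  # someone edits a running instance
        for incident in detect_drift(baseline, fingerprint(watched)):
            print("INCIDENT:", incident.kind, incident.path)
```

Because the baseline is captured only once, at creation, there is no “approved change” path here; that matches the policy that updates arrive as new instances rather than edits to running ones.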
Essentially, change should be detected in near real time, and a new compliance check should be run whenever a change is detected. If your policy never allows changes to a running instance, the detected change should also generate an incident. In some cases, cloud assets that fail a compliance (or vulnerability) check at instantiation can even be stopped automatically by calling the Cloud Management Console via an API. The Tripwire Enterprise console can also send an alert with details of the failure to the security and DevOps teams. In theory, a newly instantiated instance should never fail a compliance check, and you can trust that it never will. But it’s still important to always verify.

On-premises assets are often configured correctly when initially deployed, but then configuration drift occurs, and they need re-verification and remediation. On-premises assets should also be monitored on a continuous basis, and there is often a set time frame for how long an on-premises device can be out of compliance before it must be remediated. If you use your cloud assets the same way as on-premises devices, having simply moved your applications to the cloud with the same processes, then those processes can be exactly the same for both cloud and on-premises assets. Still, that is not the case for most cloud deployments I’ve seen in the past year; a DevOps process is often used with cloud assets.

Verifying configuration controls in the cloud is just as important as, if not more important than, verifying them in the on-premises environment, and both should be monitored on a continuous basis. The processes you set in motion when a failed configuration is detected will often differ between the two environments, but knowing which processes will apply is most of the battle.
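The two response processes described above can be sketched as a small decision function: a cloud instance that fails its compliance check is stopped and reported, while an on-premises device gets a remediation window before the failure is escalated. In this Python sketch, the `stop_instance` and `alert` callables, the 14-day window, and the field names are hypothetical. On AWS, for example, `stop_instance` might wrap boto3’s EC2 `stop_instances` call, but that wiring is an assumption and is not shown.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable, Optional


@dataclass
class Asset:
    asset_id: str
    environment: str                         # "cloud" or "on-prem"
    failed_since: Optional[datetime] = None  # when compliance first failed


# Hypothetical policy value: how long an on-prem device may stay non-compliant.
REMEDIATION_WINDOW = timedelta(days=14)


def respond_to_failure(
    asset: Asset,
    now: datetime,
    stop_instance: Callable[[str], None],
    alert: Callable[[str], None],
) -> str:
    """Apply a different response process depending on where the asset lives.

    Returns a short label for the action taken, so the decision is easy to
    log and to test.
    """
    if asset.environment == "cloud":
        # Immutable cloud instance: a failed check means the instance should
        # not be running, so stop it through the provider's API and notify.
        stop_instance(asset.asset_id)
        alert(f"{asset.asset_id}: failed compliance check, instance stopped")
        return "stopped"
    # On-premises: drift is expected over time, so allow a remediation window.
    if asset.failed_since is None:
        asset.failed_since = now
    if now - asset.failed_since > REMEDIATION_WINDOW:
        alert(f"{asset.asset_id}: out of compliance beyond remediation window")
        return "escalated"
    return "remediate"


if __name__ == "__main__":
    stopped, alerts = [], []
    t0 = datetime(2024, 1, 1)
    print(respond_to_failure(Asset("vm-123", "cloud"), t0, stopped.append, alerts.append))
    server = Asset("db-01", "on-prem")
    print(respond_to_failure(server, t0, stopped.append, alerts.append))
    print(respond_to_failure(server, t0 + timedelta(days=20), stopped.append, alerts.append))
```

Passing the stop and alert actions in as callables keeps the policy decision separate from any particular cloud API or alerting system.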